Ubiquitous computing is the embedding of computation into everyday objects and spaces so technology fades into the background. UX (user experience) designers craft seamless, context-aware experiences across devices and environments to facilitate ubiquitous computing and ensure technology stays helpful, invisible, and intuitive even as it surrounds users.

Explore how context-aware technology literally surrounds people and offers a wide range of exciting design opportunities, in this video with Alan Dix: Author of the bestselling book Human-Computer Interaction and Director of the Computational Foundry at Swansea University.

Why Ubiquitous Computing is Everywhere

“Everywhere” is the word with ubiquitous computing—often called ubicomp, pervasive computing, or ambient intelligence—as it means embedding computing into the environment so that digital services appear anytime, anywhere, using diverse devices and sensors. Think of a well-equipped modern home, with voice-activated assistants like Alexa and Google Nest home automation systems—all awaiting input from those living in it to improve their lives through sheer convenience.

The rise of the smart home mirrors the rise of the smartphone, except the former is arguably more intimate and seamless than the latter. Unlike the conscious “reach for” element of a phone, ubiquitous computing is part of the environment and users can forget devices are there. One of the keys to the power of ubiquitous computing is that devices are aware of their users’ world—or at least their immediate environment such as their home—and context. Over time, “ubi tech” becomes acquainted with user behaviors and can anticipate user needs via the “training” such ubiquitous computing devices receive from the people operating them, such as voice user interfaces (VUIs) learning their owner’s voice patterns. And context-aware systems that can anticipate user needs without explicit interaction are ones that blend digital into physical life.

Explore why designing for context-awareness makes great sense, in this video with Frank Spillers: Service Designer, Founder and CEO of Experience Dynamics.

Why Ubiquitous Computing Matters to UX Designers

Perhaps most significantly, ubicomp excels where traditional computing falls short. It offers users and designers a distinctly modern, yet timelessly relevant, set of benefits. Effective UX design for ubiquitous computing:

Reduces Friction

Devices anticipate needs from context and sensors, reducing explicit commands. Context awareness, seamless handoffs between devices, and integration with physical activities allow a more “natural” co-existence, if not harmony, between users and devices.

For example, a runner’s playlist can adjust based on pace, their route is tracked automatically, and workout data syncs across multiple devices without the runner’s conscious intervention. If our runner had to deliberately tap, swipe, and press on a device to execute commands, they would need to slow down or stop—a minor inconvenience that would nevertheless “counteract” benefits of what should be life-improving conveniences. This alternative—or “new way”—represents computing that augments rather than interrupts human activity.

Affords Greater Accessibility

The implications of ubicomp extend beyond convenience in other valuable ways, too, and accessible design is particularly important. Ubiquitous computing enables new forms of accessibility—voice interfaces help users with visual disabilities, for example, while gesture recognition assists those with limited mobility.

Discover how accessible designs help users with disabilities and users without, too, in this video.

Supports Ambient Interactions and Learning

Ambience and background are keywords in ubicomp, as it also creates opportunities for ambient learning and passive skill development through context-sensitive information delivery. Calm technology encourages peripheral notifications that inform without interrupting users—helpfulness that comes from sources that blend into the environment, almost as if they weren’t even there.

Enables Invisible Convenience

Along with such ambience comes an invisibility that carries across many environments: smart homes, healthcare monitoring, and city services all become more seamless when computing runs in the background.

Challenges Brands to Address Critical Ethical Design Concerns

One aspect of all this “background convenience” that can alarm users is what it implies for their privacy. While surveillance and inequality are primary concerns, designers have a golden opportunity to tailor solutions around privacy, fairness, and access, and so minimize those risks.

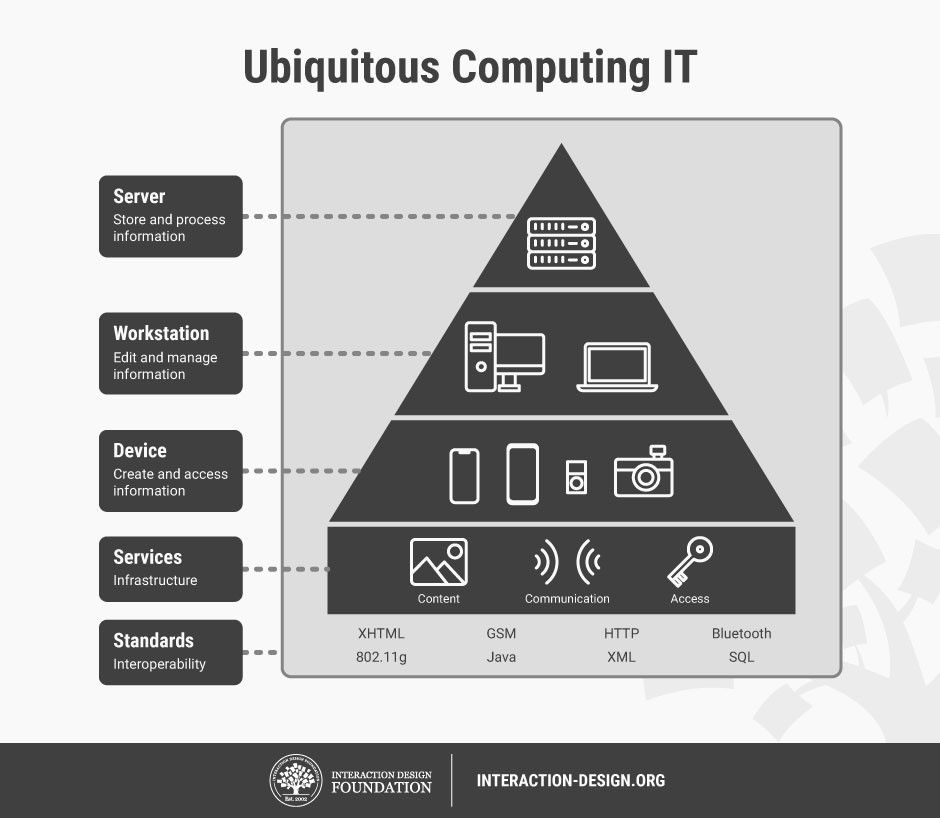

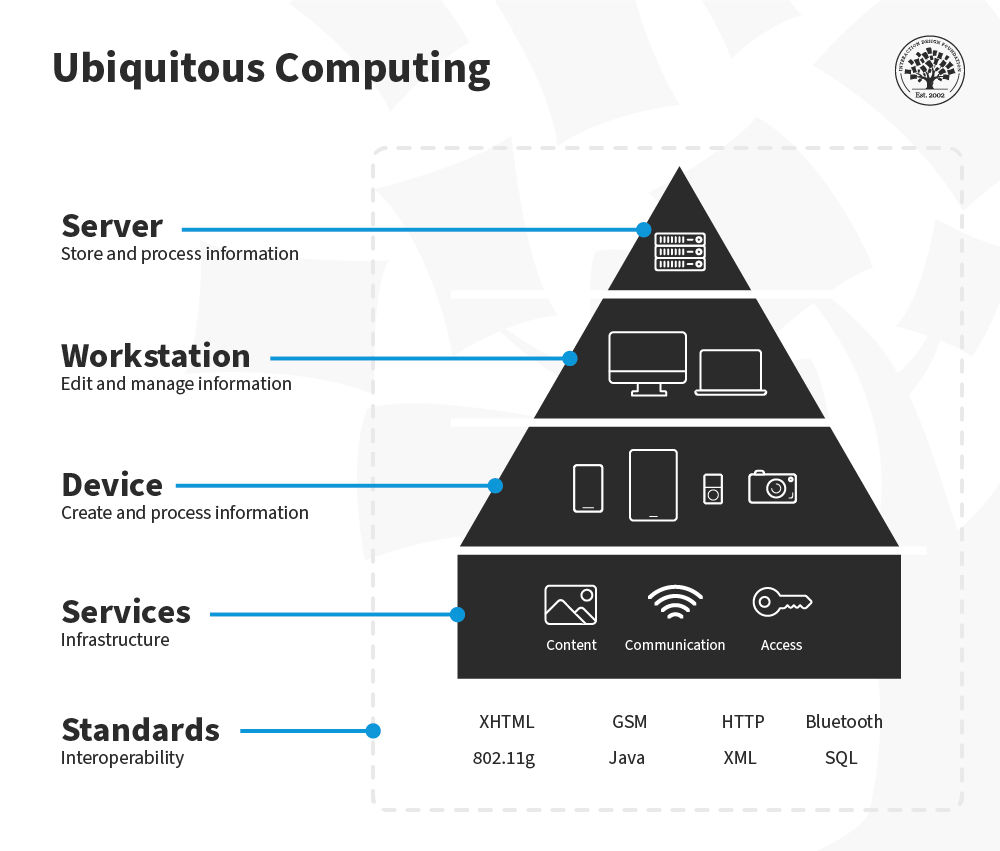

How to envision computers dissolving into everyday life—computing in clothing, furniture, and architecture, operating in the background? This high-roofed house—or pyramid on a pedestal—captures what’s going on succinctly: with the server at the top, to store and process information; the workstation below it, to edit and manage information; then the device, to create and process information; then services below that, for infrastructure; and, on the bottom, standards—for interoperability.

© Interaction Design Foundation, CC BY-SA 4.0

How to Design for Ubiquitous Computing, Step by Step

Try this suggested approach to UX design for ubiquitous computing:

1. Define Contexts of Use

First and foremost, uncover the essential knowledge you’ll need via user research—to start, map where and how users will encounter sensors, devices, or ambient interfaces. For example, if it’s a home assistant that can monitor and control devices and systems like central heating, stoves and ovens, and lighting, interactions will mostly occur in the home but also via remote control (via a smartphone, for example) from afar. Consider physical environments, routines, and sensory inputs—for example, busy users may be rushing around their home saying commands from various rooms, at various volumes.

Explore how to design better for user behavior and user needs through users’ contexts of use, in this video with Alan Dix.

2. Specify User Needs and Goals

Flesh out your user research with contextual interviews, diary studies, and observation in real settings. Ethnographic research techniques particularly empower you to find out from users what matters to them, why, and how your design solutions might delight them. In particular, cultural probes can help you reach the level where users provide the most accurate insights into their lived realities.

Identify tasks users perform—especially unnoticed ones—that technology could support. For example, for our home assistant, a user might prefer Saturdays for their main laundry day—a notification from your design could gently remind them to wash their clothes on Saturday mornings.

Consider how cultural probes can uncover valuable insights to inform your design decisions for the better, in this video with Alan Dix.

3. Decide Interaction Modalities

Mainly, you’ll want to design for calm interactions and notifications in the periphery, so inform users without capturing their full focus. Use subtle cues such as ambient light, gentle audio, or haptic signals, and escalate to direct contact only when it’s necessary or urgent.

For example, if a user is baking a cake and their front door sensor indicates they’re leaving the property, their smart home assistant that controls cooking devices could send them an alert.

Blend voice, gesture, ambient light, spatial audio, or tangible interfaces as appropriate.
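The baking example above can be sketched as a small escalation rule. This is only an illustration under stated assumptions: the names `Channel`, `HomeState`, and `choose_channel` are hypothetical, and a real assistant would weigh many more signals than three booleans.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class Channel(IntEnum):
    """Hypothetical output channels, ordered from calmest to most demanding."""
    AMBIENT_LIGHT = 1
    HAPTIC = 2
    PHONE_ALERT = 3


@dataclass(frozen=True)
class HomeState:
    """Assumed, simplified snapshot of what the home assistant knows."""
    oven_on: bool
    door_opened: bool
    user_home: bool


def choose_channel(state: HomeState) -> Optional[Channel]:
    """Pick the calmest channel that still fits the urgency of the situation."""
    if not state.oven_on:
        return None  # nothing to report, so stay silent
    if not state.user_home:
        return Channel.PHONE_ALERT  # oven on and nobody home: urgent
    if state.door_opened:
        return Channel.HAPTIC  # user may be about to leave: gentle nudge
    return Channel.AMBIENT_LIGHT  # routine baking: peripheral cue only
```

The design choice worth noting is the ordering: the function defaults to silence or the calmest cue and only moves up a channel when the situation demands it.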

Discover how and when to harness the power of haptics to boost your designed product’s effectiveness, in this video with Alan Dix.

4. Prioritize Context Awareness

Context deserves more than a single entry on this list, since you need to stay aware of it throughout. Combine sensors (such as motion, location, and biometric sensors) to infer user state while preserving users’ privacy. Keep context inference simple and transparent; users will trust designs that make the effort to understand the complexities of what’s going on and respect them, too.
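One way to keep inference “simple and transparent” is to use a handful of readable rules that return their reasons alongside the result. The sketch below assumes three hypothetical readings, and its thresholds (60 and 110 bpm) are purely illustrative, not clinical or recommended values.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Readings:
    """Assumed sensor snapshot; the field names are hypothetical."""
    motion_detected: bool
    at_home: bool
    heart_rate_bpm: int


@dataclass(frozen=True)
class Inference:
    state: str
    reasons: List[str]  # plain-language explanation that can be shown to the user


def infer_user_state(r: Readings) -> Inference:
    """Rule-based inference that always explains itself."""
    if not r.at_home:
        return Inference("away", ["location is outside the home"])
    if not r.motion_detected and r.heart_rate_bpm < 60:
        return Inference("resting", ["no motion detected", "heart rate below 60 bpm"])
    if r.motion_detected and r.heart_rate_bpm > 110:
        return Inference("exercising", ["motion detected", "heart rate above 110 bpm"])
    return Inference("active", ["at home with no stronger signal"])
```

Because every result carries its `reasons`, the interface can answer “why did the system do that?” without exposing raw sensor data.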

5. Prototype in the Wild

Build prototypes in real-world settings (“in the wild”) to test usability beyond lab conditions. You want your prototypes to meet the users in their environments and contexts of use—the only way to assess their value. Use low-fidelity physical sensors or mock artifacts to simulate invisibility and context adaptation.

Peer into the potential that prototyping offers so you can make better design choices from more informed testing results, in this video with Alan Dix.

6. Evaluate Experience Holistically

Because ubicomp weaves so intricately into users’ lives, you’ll want to assess beyond what they get done with it. So, measure not just task completion but also effects on sleep, cognitive load, attention flow, trust, and user satisfaction.

Use attention-management frameworks to design for when interruptions occur, for they will; that’s the nature of human living. It’s vital to ground design in real settings, which is why you test in the field rather than rely on assumptions or lab-only observations. Field deployments expose hidden usability issues and friction in real lighting, noise, and social settings.

7. Iterate with Ethical Safeguards

The “price” of modern living can seem extreme to many people, especially when it comes to their homes—their “sanctuary” where they should feel they can drop their guard and be themselves in full. So, include privacy controls and transparency about sensing and data use. When users have full control over what their homes hear, register, and report, they’ll more likely trust your brand and dispel fears of tech totalitarianism and device eavesdropping. As this is such a potentially serious issue, here’s a quick checklist:

Always inform users what your designed system is sensing and why.

Secure data streams, allow anonymization, and support user consent.

Apply fairness frameworks to avoid biased behavior or exclusion.
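The first two checklist items can be sketched as a small registry that records why each sensor exists and withholds readings until the user consents. Everything here (`SensorPolicy`, `SensingRegistry`, and the method names) is hypothetical, and the sketch leaves out encryption and anonymization entirely.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class SensorPolicy:
    purpose: str             # plain-language reason shown to the user
    consented: bool = False  # sensing is off until the user opts in


@dataclass
class SensingRegistry:
    policies: Dict[str, SensorPolicy] = field(default_factory=dict)

    def declare(self, sensor: str, purpose: str) -> None:
        """Register a sensor together with the reason it exists."""
        self.policies[sensor] = SensorPolicy(purpose)

    def set_consent(self, sensor: str, granted: bool) -> None:
        """Record the user's choice (raises KeyError for an undeclared sensor)."""
        self.policies[sensor].consented = granted

    def disclosure(self) -> List[str]:
        """List what is sensed and why, ready to show in a settings screen."""
        return [f"{name}: {self.policies[name].purpose}" for name in sorted(self.policies)]

    def read(self, sensor: str, raw_value: float) -> Optional[float]:
        """Pass a reading through only if the sensor is declared and consented."""
        policy = self.policies.get(sensor)
        if policy is None or not policy.consented:
            return None
        return raw_value
```

Making consent default to off, and routing every reading through `read`, means an undeclared or unapproved sensor yields nothing rather than silently collecting data.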

8. Deploy Thoughtfully

Ensure systems degrade gracefully if sensors fail or connectivity drops, and offer fallback experiences or manual modes. For example, a front or garage door should default to closing and locking in the event of a power or system failure; otherwise, the user might be wide open to opportunistic burglars. Reassure users with notifications that their property is safe and secure following such safeguard measures. For example, our homeowner may be on vacation in another country. If their video monitoring system can’t show them a lowered garage door, or it has registered a problem, a notification that (accurately) says there’s nothing to worry about might mean the difference between peace of mind and a ruined trip.
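A minimal sketch of this fallback behavior, assuming a hypothetical `resolve_door_command` function and two health flags that a real system would derive from heartbeats and sensor self-checks:

```python
from enum import Enum
from typing import Optional, Tuple


class DoorCommand(Enum):
    OPEN = "open"
    CLOSE_AND_LOCK = "close_and_lock"


def resolve_door_command(
    requested: Optional[DoorCommand],
    hub_online: bool,
    sensor_ok: bool,
) -> Tuple[DoorCommand, str]:
    """Return the command to execute plus a message for the user."""
    if hub_online and sensor_ok and requested is not None:
        return requested, f"Door command '{requested.value}' carried out."
    # Degraded mode: fall back to the secure default and tell the user about it.
    return (
        DoorCommand.CLOSE_AND_LOCK,
        "Connection or sensor problem detected. The door was closed and locked "
        "as a precaution; manual control remains available.",
    )
```

Returning the message together with the command keeps the reassurance tied to what the system actually did, so the notification stays accurate.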

Also beware of information overload: too many ambient signals or too much information coming at once can overwhelm users. Always craft experiences that filter notifications by relevance and timing.
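Filtering by relevance and timing might look like the sketch below. The relevance score, the 0.5 threshold, and the 22:00 to 07:00 quiet window are all assumptions chosen for illustration; a real product would let users tune them.

```python
from dataclasses import dataclass
from datetime import time


@dataclass(frozen=True)
class Notification:
    message: str
    relevance: float      # assumed score from 0.0 (noise) to 1.0 (essential)
    urgent: bool = False  # urgent items bypass every filter


def in_quiet_hours(now: time, start: time = time(22, 0), end: time = time(7, 0)) -> bool:
    """True if `now` falls inside the quiet window, which may wrap past midnight."""
    if start <= end:
        return start <= now < end
    return now >= start or now < end


def should_deliver(n: Notification, now: time, min_relevance: float = 0.5) -> bool:
    """Deliver urgent items always; otherwise respect timing, then relevance."""
    if n.urgent:
        return True
    if in_quiet_hours(now):
        return False
    return n.relevance >= min_relevance
```

Under these assumptions, a low-relevance reminder at midday is dropped, a relevant one at 23:00 waits, and a smoke alarm always gets through.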

9. Plan for Interoperability

Systems should operate across devices and vendors—for example, your smart home assistant may need to work with other apps, monitoring, law enforcement, and other services. Ensure protocols and interfaces work smoothly across environments.
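At the code level, one common way to work across vendors is to define a small shared interface and wrap each vendor’s device behind it. The sketch below is an assumption-laden illustration: `Light`, `VendorALight`, and `VendorBLightAdapter` are invented names, and the 0 to 255 scale stands in for whatever a real vendor API expects.

```python
from typing import Dict, Protocol


class Light(Protocol):
    """Vendor-neutral interface the assistant codes against."""
    def set_brightness(self, percent: int) -> None: ...


class VendorALight:
    """Hypothetical device that already speaks in percentages."""
    def __init__(self) -> None:
        self.level = 0

    def set_brightness(self, percent: int) -> None:
        self.level = max(0, min(100, percent))


class VendorBLightAdapter:
    """Adapter for a hypothetical device that expects a raw 0-255 value."""
    def __init__(self) -> None:
        self.raw = 0

    def set_brightness(self, percent: int) -> None:
        clamped = max(0, min(100, percent))
        self.raw = round(clamped * 255 / 100)


def dim_all(lights: Dict[str, Light], percent: int) -> None:
    """Apply one brightness level to every light, whatever its vendor."""
    for light in lights.values():
        light.set_brightness(percent)
```

The assistant’s own logic only ever calls `set_brightness`, so supporting another vendor means writing one more adapter rather than touching the rest of the system.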

Users’ homes can recognize and help them in ways that offer security and convenience—for example, for users who carry shopping bags in both hands, there’s no need to fumble with a pocket for keys when fingerprint entry is so handy.

© WREO, Fair use

How Did Computers Become So Ingrained in Everyday Life?

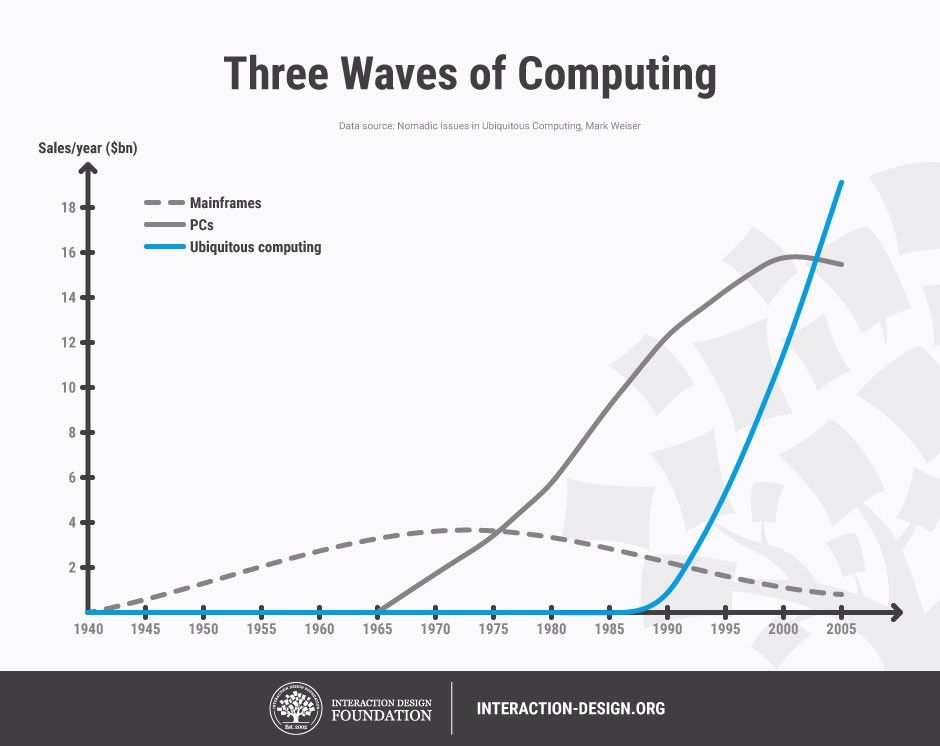

Ubiquitous computing might sound almost dramatic to some people, as if to suggest a new kind of world (the digital) might rise to challenge the old (the “analogue”). However, and for all the artificial intelligence (AI) at work, it’s more of a natural progression. Xerox PARC researcher Mark Weiser articulated the concept in the early 1990s, envisioning a world where computing would become so seamlessly integrated into human environments that it would essentially disappear from conscious awareness. Roy Want and colleagues built early ubicomp systems such as the Active Badge (at Olivetti Research in Cambridge) and the PARCTab (at Xerox PARC) in the early 1990s, enabling indoor localization and context-aware behaviors long before smartphones became what they are today.

“The Ubiquitous Computing era will have lots of computers sharing each of us. Some of these computers will be the hundreds we may access in the course of a few minutes of Internet browsing. Others will be imbedded in walls, chairs, clothing, light switches, cars – in everything. UC is fundamentally characterized by the connection of things in the world with computation.”

— Mark Weiser & John Seely Brown, 1996

Where the paradigm of “everywhere computing” might have once seemed like science fiction to some observers, it has gradually evolved from theoretical framework to lived reality—and modern design expectations. The future is “here,” surrounding users every day and fundamentally challenging the desktop metaphor that dominated personal computing for decades.

To put ubicomp into perspective, consider its “predecessor.” The traditional computing model—with its characteristics of Windows, Icons, Menus, and Pointers (WIMP)—emerged when computers were expensive, stationary machines that needed dedicated attention and explicit interaction. At home or at the office, users sat at desks, manipulated cursors, and consciously engaged with applications through hierarchical menu systems. This model served well when computing was a distinct activity. However, it’s too “deliberate” for more intimate aspects of daily life as digital capabilities permeate almost every facet of twenty-first-century living.


Ubicomp represents the kind of evolutionary step designers can stay ahead of with exciting, relevant, and respectful digital solutions to help users enjoy modern living in its many forms.

© Interaction Design Foundation, CC BY-SA 4.0

Technological advancements and convergences helped bring about the rise of ubiquitous computing. The steady miniaturization described by Moore’s Law made processors small and cheap enough to embed in everyday objects. Wireless networking freed devices from physical tethers, while advances in sensors, displays, and battery technology made always-on, context-aware computing feasible. Maybe most crucially, the internet provided the infrastructure for these distributed devices to communicate and coordinate.

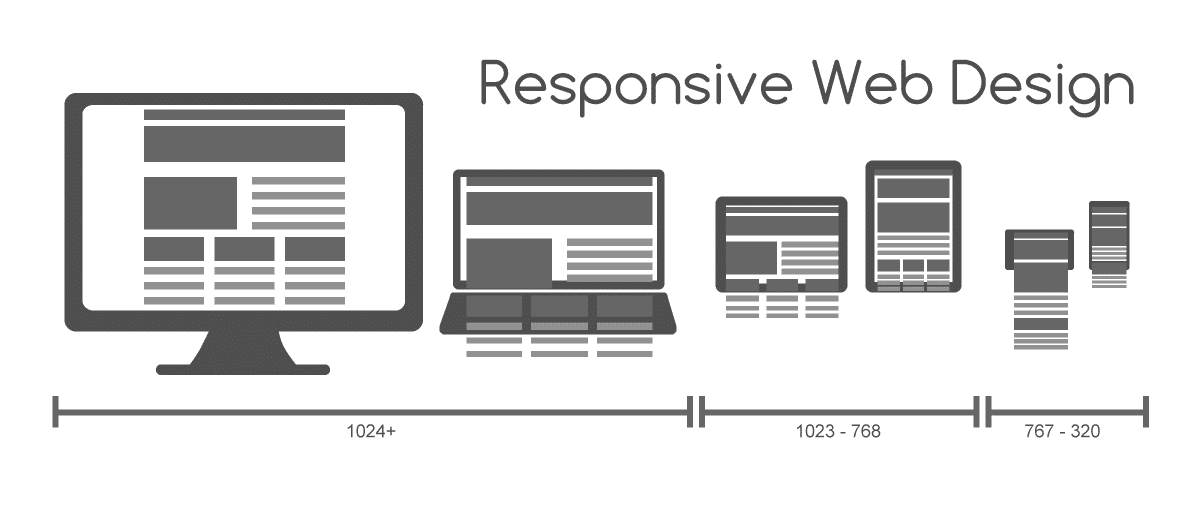

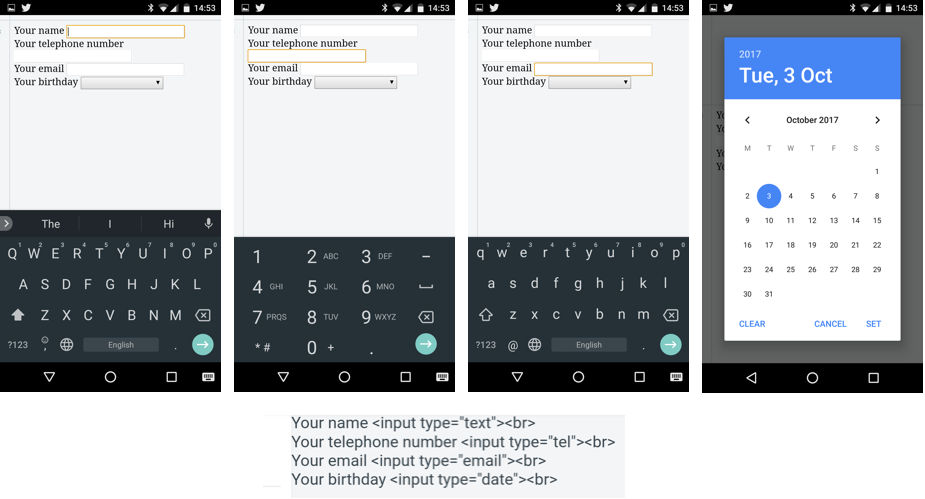

The smartphone revolution marked ubiquitous computing’s first major victory over traditional desktop paradigms. Instead of needing to sit at workstations to get anywhere online, users were suddenly able to carry powerful computers that respond to touch, voice, and gesture. With the prevalence of smart mobile phones and tablets, so too came the need for dedicated mobile UX design to cater to these unique experience types. Mobile devices don’t just replicate desktop functionality, and design for them certainly isn’t about shrinking desktop-tailored experiences. Mobiles enable entirely new interaction models based on location awareness, biometric authentication, and ambient intelligence.

Smart homes showcase ubicomp’s maturation in many ways:

Thermostats learn occupancy patterns and adjust temperature automatically.

Voice assistants embedded in speakers, displays, and appliances respond to natural language commands without requiring traditional input devices.

Lighting systems adapt to circadian rhythms.

Security cameras use computer vision to distinguish between family members and strangers.

To a great extent, these systems abandon windowed interfaces in favor of ambient feedback, voice interaction, and automated responses.

Wearable technology further demonstrates this shift. Fitness trackers monitor physiological data continuously, providing insights without demanding active user engagement. Smartwatches deliver notifications through haptic feedback and micro-interactions that would be cumbersome on traditional computers. Medical devices like continuous glucose monitors and pacemakers perform life-critical computing entirely in the background. Even while asleep, users might rest easier knowing they’re being watched over—not necessarily surveilled.

The Internet of Things (IoT) has expanded this computing fabric into vehicles, retail environments, and public infrastructure. Modern cars incorporate dozens of computers that manage everything from engine performance to entertainment, often accessible through touch screens and voice commands instead of traditional interfaces. Retail environments use RFID (radio frequency identification) tags, mobile payments, and recommendation algorithms to create shopping experiences that barely resemble the explicit transaction models of earlier e-commerce.

Thanks to such advances, interconnectivity, and machine learning, modern society is set up to know more—and help more—via ethically designed software and technology, aware of what to monitor and how to respond.

Explore how machine learning features at so many levels in modern UX design and society, in our video.

Special Considerations for Ubiquitous Computing Design

Given the more personal and intimate nature of technology that seamlessly dissolves into the user’s life, environment, and lifestyle, consider these design responsibilities and potential hazards in particular.

Fairness and Inclusion

When designing personalized experiences, make sure the system doesn’t reinforce stereotypes or unfair biases. Collect data from a wide range of users and test your designs across diverse groups. This helps ensure your product works equally well for everyone, respects different needs, and treats all users with dignity.

Cultural and Social Norms

Different environments have different expectations, a reality that extends across many nations and societies around the world. Social acceptability, cultural sensitivities, and local norms shape whether ambient cues feel appropriate, so be sure to factor that into design choices.

Discover how to design with culture in mind, in this video with Alan Dix.

Regulatory Context

Data-centered systems in public spaces may be subject to laws such as GDPR or, where medical records are involved, HIPAA. Designers must align data practices with the applicable regulations to protect users’ data and privacy.

Overall, ubiquitous computing is here to stay, spread, and evolve and, when ethically designed, to further improve many standards of modern living as well. Conscientious designers can mindfully embed intelligence into everyday life and push the benefits for users to higher levels while respecting individuals’ rights and dignity.

From household name brands that offer users better options to manage their home lives to sensor networks that help with traffic prediction and city planning, ubicomp represents great possibilities and responsibilities. It can make a great deal possible for many people—be they elderly users who need medication reminders or fall monitoring, single parents managing hectic lives, or businesses managing the spaces where great things happen. Ubiquitous computing can help prevent fires, keep family commitments running smoothly, aid in sleep—the list is long and growing as further digital applications weave successfully into users’ lives and living spaces.

As artificial intelligence improves and hardware continues to miniaturize, the world will continue to witness the most profound technologies disappearing into the fabric of everyday life, making people more capable without making them more conscious of the computational infrastructure supporting their activities. One thing in particular for designers not to forget on this journey into the future: keep the user in control, and help them stay empowered.