Human-robot interaction (HRI) is the field that studies and designs how people and robots communicate, collaborate, and live together. It involves creating robots that can detect, interpret, and model human actions, signals, and intentions well enough to interact effectively; that respond, within the limits of their sensing and decision-making, in ways consistent with their programmed goals and the user’s context; and that operate safely in human environments. As a UX (user experience) designer working in HRI, you shape how machines behave around people: how they signal intention, interpret human behavior, and build trust, so that robots enhance human activities without friction or danger.

In this video, William Hudson, User Experience Strategist and Founder of Syntagm Ltd., explains how the Fourth Industrial Revolution creates a deeply connected technological world that sets the stage for advanced human-robot interaction.

Is a Robot What You Think It Is?

A robot is a programmable machine capable of carrying out physical tasks or actions automatically, often with some degree of autonomy. Robots can sense their environment, process information, and respond through movement or behavior that is either pre-programmed or adaptive. They may look mechanical, humanoid, or entirely utilitarian, but their core function is to perform work, which can be physical, cognitive, or interactive, without needing constant human control. To qualify as a robot, a system must include both a computational (digital) component and a physical or embodied component that acts in the real world. One notable example is Boston Dynamics’ Spot, a four-legged robot used for inspections, on construction sites, in public-safety applications, and much more. Another is likely a household name already: the Roomba robot vacuum cleaner.

Robots have thrived in the popular imagination ever since Czech writer Karel Čapek introduced the term in his 1920 science fiction play R.U.R. (Rossum’s Universal Robots). The Czech word “robota” means forced labor or drudgery. In 1954, George Devol invented the first programmable robot, called “Unimate,” and patented it as a “Programmed Article Transfer” machine. Designed for industrial tasks, Unimate could move parts and tools. Early robots soon emerged in the automotive, electronics, and aerospace industries to automate repetitive or dangerous tasks, increase precision and efficiency, and reduce labor costs in manufacturing.

Advances in robotics by the 2020s have taken things to higher levels. Modern robots exist to support humans: not just to replace labor, but to enhance safety, accessibility, efficiency, and even quality of life. Their purpose is increasingly shaped by ethical, human-centered design goals as much as by technological capability. As a designer of robots, you’ll be responsible for ensuring that robots help rather than harm, and when you design robots that serve users and improve their lives, you help realize what robotics is ultimately meant to achieve. In the era of the Internet of Things and calm computing, the number of computer-controlled devices in people’s homes and on their persons has grown so much that technology really is all around most users. Fittingly, it’s also a perfect time for considerate and effective HRI UX design to help improve countless lives everywhere.

In this video, Alan Dix, Author of the bestselling book “Human-Computer Interaction” and Director of the Computational Foundry at Swansea University, helps you recognize how everyday devices contain numerous embedded computers, giving you insight into the complex, technology-rich contexts that robots must navigate safely and supportively.

How to Design for Human-Robot Interaction: Steps & Best Practices

To create effective and humane human-robot interaction, you’ll need a structured, user-centered, and safety-aware process. Here are key steps and design practices.

1. Understand the Human Context, Goals, and Environment

Start by defining who’ll interact with the robot, under what conditions, and for what purpose. Ask yourself:

Who are the users? What are their abilities, needs, comfort levels, and expectations?

Where will the interaction happen? At home, in a workplace, a hospital, or a public space?

What tasks or functions should the robot fulfill? Assistance, collaboration, caregiving, entertainment, mobility, or monitoring?

When you understand the users’ context and goals, you’ll be better able to choose appropriate interaction styles. For instance, a robot in a hospital must prioritize safety and clarity, while a companion robot for older adults must respect emotional comfort and social cues. HRI is inherently interdisciplinary, drawing on robotics, design, psychology, and ethics, and all of these need to come together if you’re going to create robots that truly help people.

In this video, Alan Dix demonstrates how attending to users’ bodies, surrounding actors, environmental conditions, and past events helps you design interactions that truly fit people’s needs, reinforcing why HRI must look beyond the robot’s interface to the full human context.

2. Choose Suitable Interaction Modalities (Speech, Gesture, Sensors, Physical Feedback)

Robots can communicate through many channels beyond traditional screens, so use modalities that match human expectations and the environment:

Speech or natural language for intuitive commands or conversation.

Gesture, posture, or movement detection: humans signal intent with body language; robots can sometimes recognize and interpret these cues using vision and sensor systems, although accuracy depends on the environment, training data, and task.

Sensors (vision, proximity, force/tactile) to detect human presence, motion, or physical interaction.

Haptic or tactile feedback to give users a physical sense of the robot’s intent or actions.

By combining multiple channels (multimodal interaction), you increase flexibility and make interaction between user and robot more natural and accessible.
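As a rough illustration of how multimodal input can be combined, here is a minimal Python sketch of late fusion, assuming each recognizer (speech, gesture) already outputs a command label with a confidence between 0 and 1. The names `ModalityReading` and `fuse_commands`, the per-modality weights, and the 0.5 threshold are hypothetical placeholders, not part of any particular robot platform.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class ModalityReading:
    """One recognizer's best guess (hypothetical structure)."""
    modality: str      # e.g. "speech" or "gesture"
    command: str       # e.g. "stop", "come_here"
    confidence: float  # 0.0 to 1.0, as reported by the recognizer


# Hypothetical weights: trust speech slightly more than gesture in this setting.
DEFAULT_WEIGHTS = {"speech": 0.6, "gesture": 0.4}


def fuse_commands(readings, weights=DEFAULT_WEIGHTS, threshold=0.5):
    """Late fusion: sum weighted confidences per command.

    Returns the best-supported command, or None when nothing clears the
    threshold, in which case the robot should ask for clarification.
    """
    scores = defaultdict(float)
    for r in readings:
        # Unknown modalities get zero weight rather than raising an error.
        scores[r.command] += weights.get(r.modality, 0.0) * r.confidence
    if not scores:
        return None
    best_command, best_score = max(scores.items(), key=lambda kv: kv[1])
    return best_command if best_score >= threshold else None
```

In this sketch, agreement between channels raises the combined score (speech saying “stop” at 0.7 and a matching gesture at 0.9 give 0.6 × 0.7 + 0.4 × 0.9 = 0.78), while a weak signal from one channel alone falls below the threshold, so the robot can ask instead of guessing.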

In this video, Alan Dix shows you how different forms of haptic and tactile feedback can shape interaction, highlighting when touch-based modalities succeed or fail.

3. Ensure Clarity, Predictability, and Transparency in Robot Behavior

People need to understand what the robot intends to do, and when and why it will do it; otherwise, the interaction will feel unpredictable or unsafe. That’s a perfectly natural concern. People tend to react to uncertainty with wariness or even fear, and while a robot may not be a bear or a tiger, a human mind in self-preservation mode can quickly conjure images of a merciless machine advancing and ignoring pleas for reason. To address that, design the robot to:

Signal its status and intentions clearly, such as with lights, sounds, or movement cues, before acting.

Behave in predictable ways: avoid sudden, surprising motions.

Provide consistent feedback when actions succeed or fail; let users know what’s happening.
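The signal-act-report pattern in the list above can be sketched as a small wrapper that never moves without announcing first and always reports the outcome. This is a hypothetical Python illustration: `IntentSignaler`, `Status`, and the `notify` callback (standing in for whatever lights, sounds, or display a robot has) are assumptions, not an existing robotics API.

```python
import time
from enum import Enum
from typing import Callable


class Status(Enum):
    IDLE = "idle"
    ANNOUNCING = "announcing"
    ACTING = "acting"
    SUCCEEDED = "succeeded"
    FAILED = "failed"


class IntentSignaler:
    """Hypothetical wrapper: announce intent, act, then report the outcome."""

    def __init__(self, notify: Callable[[str], None], lead_time_s: float = 0.0):
        # notify drives whatever output channel the robot has (lights, sound, screen).
        self.notify = notify
        # Pause between announcing and acting so people have time to notice.
        self.lead_time_s = lead_time_s
        self.status = Status.IDLE

    def run(self, action_name: str, action: Callable[[], bool]) -> bool:
        # 1. Signal intent before doing anything physical.
        self.status = Status.ANNOUNCING
        self.notify(f"About to {action_name}")
        time.sleep(self.lead_time_s)

        # 2. Perform the action; an exception counts as a failure, not a silent stop.
        self.status = Status.ACTING
        try:
            ok = bool(action())
        except Exception:
            ok = False

        # 3. Give the same kind of feedback for success and failure.
        self.status = Status.SUCCEEDED if ok else Status.FAILED
        self.notify(f"{action_name}: {'done' if ok else 'failed'}")
        return ok
```

Routing every motion through one wrapper like this is one way to keep feedback consistent: the user gets the same kind of message whether the action succeeds, fails, or raises an error.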

Design guidelines for HRI emphasize “understandability” and “predictability” as foundational to safe, effective interaction. Alongside these, consider how users’ cultural backgrounds may influence how they respond to a robot’s design and behavior.

In this video, Alan Dix shows you how cultural differences shape how people interpret interface cues, reminding you that clear and predictable behavior depends on designs that make sense across cultures.

4. Balance Autonomy and Human Control: Design for Shared Agency

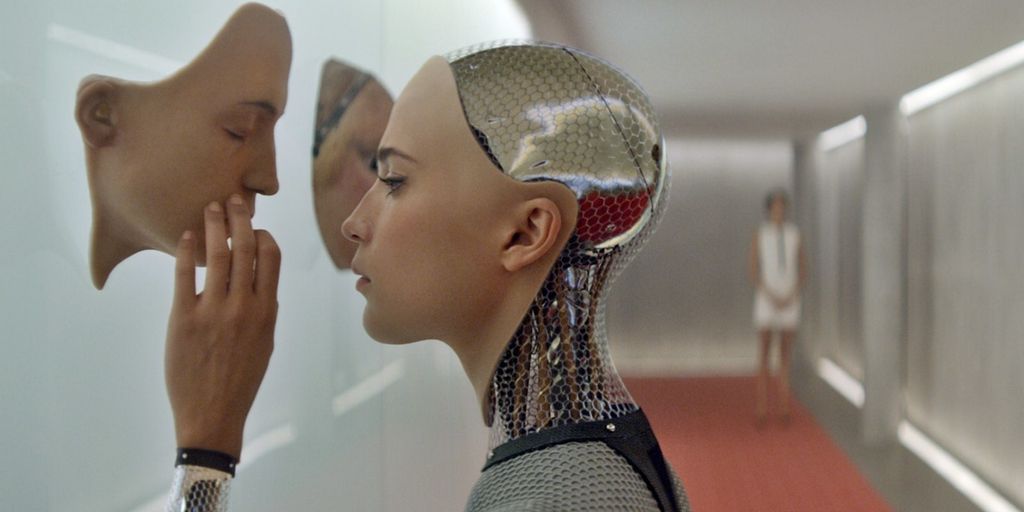

Robots often operate with some level of autonomy, but giving robots full control may make humans feel disempowered or unsafe. This concern reaches far beyond HRI designers: it has become stamped into the popular imagination, fueling countless science fiction books and movies set in dystopian futures. So, instead, aim for shared control or collaborative autonomy:

Define clear roles: When the human leads, when the robot leads, and how control shifts.

Let humans override robot decisions easily or intervene when needed.

Adapt the automation level to context, with high autonomy when it’s safe and beneficial, and a human in the loop whenever uncertainty or risk is present.
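The shared-control guidance above can be expressed as a small decision rule. The following Python sketch assumes the robot can estimate task risk and its own uncertainty as numbers between 0 and 1; the `select_mode` function, the three modes, and all thresholds are hypothetical, and a real system would derive its thresholds from a safety assessment of the specific task.

```python
from enum import Enum
from typing import Optional


class Mode(Enum):
    AUTONOMOUS = "robot leads"
    SUPERVISED = "robot proposes, human confirms"
    MANUAL = "human leads"


def select_mode(
    risk: float,
    uncertainty: float,
    human_override: Optional[Mode] = None,
    high_risk: float = 0.7,          # hypothetical: above this, the human leads
    risk_limit: float = 0.3,         # hypothetical: above this, keep a human in the loop
    uncertainty_limit: float = 0.2,  # hypothetical: robot unsure above this
) -> Mode:
    """Decide who leads, given normalized risk and uncertainty in [0, 1]."""
    # An explicit human override always wins.
    if human_override is not None:
        return human_override
    # Very risky situations: hand control to the human.
    if risk > high_risk:
        return Mode.MANUAL
    # Some risk, or the robot is unsure: robot proposes, human confirms.
    if risk > risk_limit or uncertainty > uncertainty_limit:
        return Mode.SUPERVISED
    # Safe and confident: high autonomy is appropriate.
    return Mode.AUTONOMOUS
```

For example, `select_mode(0.1, 0.05)` returns `Mode.AUTONOMOUS`, `select_mode(0.1, 0.5)` returns `Mode.SUPERVISED`, and `select_mode(0.9, 0.0)` returns `Mode.MANUAL`. Checking the override first reflects the principle that humans should be able to intervene easily at any time.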

This approach, sometimes called “adaptive collaborative control,” treats human and robot as partners, not master and tool. It calls for you to keep empathy for users firmly in mind as you build foundations on which robots interacting with humans can stand, succeed, and help rather than fall, fail, and hurt.

Discover helpful insights about the essential nature of designer-user empathy as this video shows you how empathetic design helps systems support user goals clearly and calmly: a principle that also guides how humans and robots should share control.

5. Design for Learning, Adaptation, and Personalized Behavior

Robots should adapt to human behavior, preferences, and their environment over time, which involves:

Using sensors and machine learning to detect patterns, user habits, and environmental changes.

Allowing the robot to learn and refine behavior: for example, adjusting assistance based on user comfort or past interactions.

Supporting personalization, so that users can set preferences, boundaries, or levels of assistance.
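A minimal sketch of personalization with user-owned boundaries follows. It deliberately uses a simple rule-based adjustment as a stand-in for the machine learning mentioned above: the robot nudges its assistance level based on a comfort rating, but never beyond the limits the user has set. `AssistanceProfile`, the three-point comfort scale, and the step size are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AssistanceProfile:
    """Hypothetical per-user settings; the user owns the bounds."""
    level: float = 0.5      # current assistance level: 0 = none, 1 = maximum
    min_level: float = 0.0  # user-set lower boundary
    max_level: float = 1.0  # user-set upper boundary
    step: float = 0.1       # how quickly the robot adapts to feedback

    def update_from_feedback(self, comfort: int) -> float:
        """Adjust assistance from a comfort rating.

        comfort: -1 = "too much help", 0 = "about right", +1 = "need more help".
        The adjusted level is clamped so it never leaves the user's boundaries.
        """
        if comfort not in (-1, 0, 1):
            raise ValueError("comfort must be -1, 0, or 1")
        proposed = self.level + comfort * self.step
        self.level = min(self.max_level, max(self.min_level, proposed))
        return self.level
```

Keeping the bounds separate from the adapted level means adaptation can never override a boundary the user chose; a more sophisticated learner could replace `update_from_feedback` while keeping the same clamping step.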

A human-centered evaluation, which combines qualitative (user comfort, acceptance) and quantitative (task performance, safety) metrics, helps you iterate on the design so it aligns better with human needs.
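To show how qualitative and quantitative measures can sit side by side in one evaluation round, here is a small Python sketch. The `Session` fields, the 1 to 5 comfort scale, and the `summarize` function are hypothetical choices for illustration; the metrics you collect should come from your own study design.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Session:
    """One hypothetical test session with a participant."""
    comfort: int            # qualitative: self-reported comfort, 1 (low) to 5 (high)
    would_use_again: bool   # qualitative: acceptance
    task_completed: bool    # quantitative: task performance
    task_seconds: float     # quantitative: time on task
    safety_incidents: int   # quantitative: near-misses, unexpected contacts, etc.


def summarize(sessions):
    """Report human-centered and performance metrics side by side for one iteration."""
    if not sessions:
        raise ValueError("need at least one session")
    n = len(sessions)
    return {
        "mean_comfort": mean(s.comfort for s in sessions),
        "acceptance_rate": sum(s.would_use_again for s in sessions) / n,
        "completion_rate": sum(s.task_completed for s in sessions) / n,
        "mean_task_seconds": mean(s.task_seconds for s in sessions),
        "safety_incidents": sum(s.safety_incidents for s in sessions),
    }
```

Reporting these figures separately, rather than collapsing them into a single score, helps keep a safety regression from being masked by, say, faster task times when you compare one design iteration with the next.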

Explore the possibilities as this video explains how machine learning enables systems to learn from data and improve over time: a foundation for robots that adapt to users and environments.

6. Test in Realistic, Human-Inhabited Environments, and Iterate Continuously

Since human-robot interaction involves unpredictable real-world factors such as people, spaces, noise, movement, and social norms, you’ll have to test prototypes outside the lab, in realistic settings. So:

Use observational studies with real users to discover pain points, misunderstandings, or safety risks.

Evaluate usability, comfort, trust, safety, and acceptability over time; long-term use reveals issues lab tests might miss.

Involve interdisciplinary feedback from designers, engineers, psychologists, and end users to refine both the robot’s behavior and the interaction model.

Remember, the core aim of this process is to ensure that robots fit real human life, not just ideal scenarios. That’s one reason designing with personas, research-based representations of real users, is essential.

In this video, William Hudson shows you why persona stories, grounded in real user research, lead to designs that fit actual human needs rather than idealized assumptions.

Why Human-Robot Interaction Matters

Designing good HRI unlocks major benefits and transforms robots from mere tools into cooperative, helpful agents that enhance human life and capabilities. More specifically, the benefits of human-robot interaction design done well include:

Robots Extend Human Ability, Safety, and Productivity

With thoughtful and effective HRI design, robots can assist with tasks that are repetitive, dangerous, physically demanding, or require high precision. For example, in industrial settings, robots and humans can work side by side and share tasks safely. And in healthcare, elder care, rehabilitation, or caregiving, robots can support mobility, daily tasks, or social interaction and enhance the quality of life and independence of many people.

Interaction Feels Natural and Intuitive: Reduces Friction and Learning Curve

Robots designed with strong human-centered HRI principles are more likely to feel intuitive and comfortable to users, reducing (but not eliminating) feelings of awkwardness or alienness. With speech, gesture, or natural feedback, people can engage without steep learning curves. That ease of use fosters acceptance, trust, and more widespread adoption.

This natural interaction lowers barriers for diverse user groups, including people unfamiliar with robotics, elderly users, and individuals with limited mobility. Accessible design and HRI design meet in robots that help users with disabilities and, more broadly, any user who benefits from being able to make requests and give commands in a variety of ways.

Pick up powerful points to design with, as this video explains how accessibility principles ensure that interactions are usable and intuitive for people with diverse abilities.

Enables Collaborative Autonomy and Flexible Partnerships

HRI allows a shift away from rigid, pre-programmed robots toward dynamic partners that adjust to human rhythms, habits, and context. That collaborative autonomy, where the robot adapts to user behavior and the environment, opens possibilities for more fluid, efficient human-robot teams across many domains: manufacturing, the home, services, and public spaces.

Supports Social, Emotional, and Inclusive Applications

Beyond pure functionality, human-robot interaction can address social and emotional needs. Robots that can recognize certain social and emotional signals (like facial expressions, tone of voice, or proximity) and respond with socially appropriate behaviors in well-defined contexts can serve in roles such as companionship, therapy, or customer service. They can help bridge accessibility gaps for people with disabilities, or support mental-health and social needs. Many people may feel emotional or psychological benefits from helpful robots once trust is established, though reactions will vary widely by person, culture, and context.

Overall, human-robot interaction encompasses a fascinating and increasingly relevant set of design challenges. It compels you to think beyond screens, buttons, or classical “interfaces.” It asks you to imagine robots not as tools but as partners: embodied, adaptive, empathetic agents that understand humans, act safely, and integrate naturally into daily life. And it promises to become even more exciting and opportunity-filled as some long-imagined robotic capabilities become practical, while many others remain speculative or long-term research goals.

When it comes to safety, autonomy, and trust in human-robot interaction, the core challenges are as much human as they are technical. When you design HRI thoughtfully, with respect for human context, safety, dignity, emotion, and trust, you’ll help build a future where robots extend our capabilities, support our well-being, and collaborate with us in meaningful, human-centered ways. Excellence in HRI demands humility, care, and responsibility, along with a commitment to designing for transparency, control, ethics, and real human lives.

Above all, in a world that humans and robots share, it’s the humans who need the primary focus. That’s why HRI UX design will always require designers to look beyond the “nuts and bolts” of HRI design and keep a clear view of why people, with all their quirks and “irregularities,” choose to share a world with robots, which must cater to those human traits with all the insight and care designers can program into them. A robot’s duty of care to the humans it serves becomes your duty of care to get the design right, long before the robot steps out into the world and does things your brand will be accountable for.

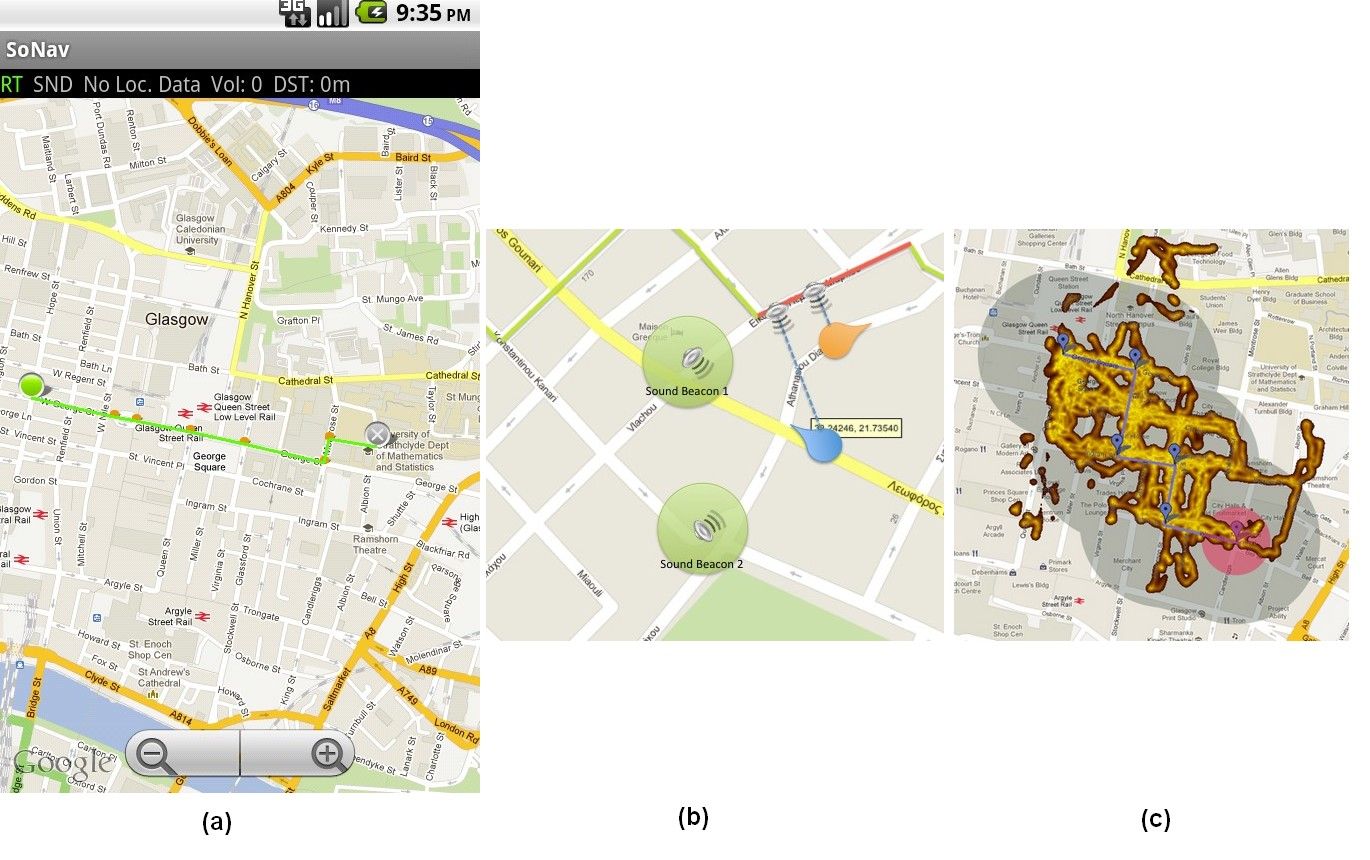

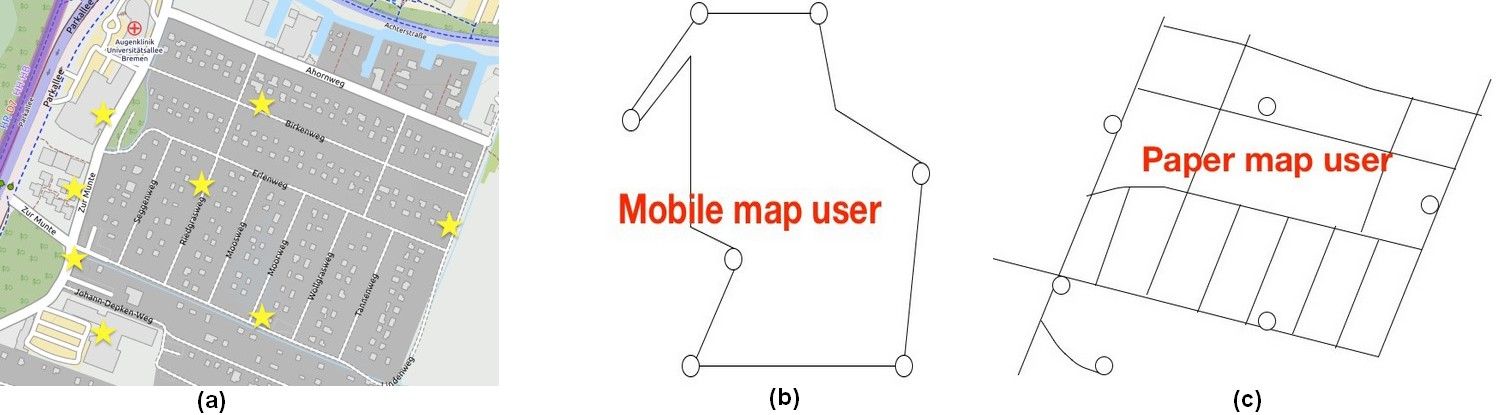

These are the dangers of UI-interaction in mobile maps, as shown by Katharine Willis et al. (2008). Learning an area and its landmarks (a) using a mobile map (b), vs. using a paper map (c): Mobile users tend to focus on the routes between landmarks, while using a paper map gives a better understanding of the whole area.