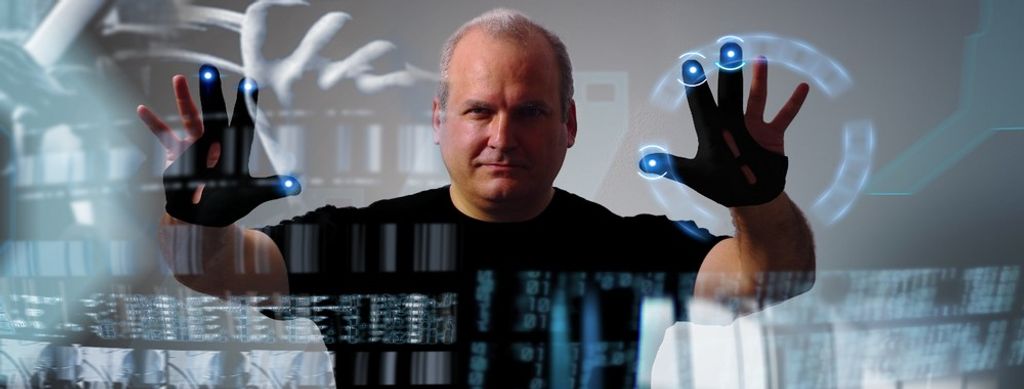

Gesture-based interaction is how users control digital systems using physical movements, such as hand swipes, pinches, or body gestures, instead of relying solely on taps, clicks, or keyboards. As a UX (user experience) designer, you can use gesture-based interaction to make interfaces feel intuitive, natural, and more human to users, empowering them to act as they would in the physical world, not just on a screen.

In this video, Frank Spillers, Service Designer, Founder and CEO of Experience Dynamics, shows you how simple, natural gestures can improve immersive interfaces while helping you avoid user fatigue.

How to Design Gesture-Based Interaction: Step by Step, with Best Practices

Let’s start with a fun exercise to help envision the possibilities of designing gesture interactions. Imagine you’ve traveled into the future and find a hologram of a restaurant kitchen in front of you. According to the growling in your stomach, your “mission” is to order a pizza. You can select from a variety of “functions” to build your ideal culinary creation: draw a circle with your finger to outline the size, then reach for and “lift” holographic toppings from the many trays on the “counter” and sprinkle on as much as you like.

The novelty of this may strike you as being like virtual reality (VR), but you quickly notice you’re not wearing a headset. Interesting, but there’s more: for your pizza, you find you can indicate the thickness, or thinness, of the crust with a simple pinch of the space between your finger and thumb. Parmesan cheese? Just shake it on. And how soft or “fire-kissed” do you want your pizza? You might turn a temperature dial, or use a motion like you would with garden shears to pump heat from a bellows (notice you’ve got two ways to do it). You instinctively know tapping your wrist twice means you want it as soon as possible. The holographic pizza changes color to indicate your choices are set, awaiting your final approval. A simple thumbs-up from you and it’s on its way (maybe via a food-grade 3D printer).

Enjoy this video, which explains how virtual reality evolved into the immersive medium that inspires today’s gesture-driven interactions.

While some aspects of pizza-making and ordering like this already exist, consider how intuitive and fun it might be to engage with some future technology like the above example. You can engage in an activity in a natural way where you don’t even realize you’re doing any “work”; for your pizza order, for example, you didn’t have to type anything (not even a credit card number; maybe you’ll have an account on file). Welcome to the world of gesture-based interaction, where you can shift from traditional UI (user interface) thinking with its buttons, menus, and forms, towards designing for movement, space, and embodied communication. This approach takes care, diligent testing, foresight, and empathy for users, so here’s a process to use as your guide:

1. Understand your Users, Context, and Tasks, then Ask: “Is Gesture Appropriate Here?”

You’ll need to begin with a solid foundation of who it’s all for, why they’ll adopt and love using it, and how they’ll perform tasks, achieve goals, and enjoy truly seamless experiences. So, start every project by clarifying who will use your interface, where, and for what purpose, and ask:

Will users benefit from fluid motion instead of precise taps?

Will they have free limbs (hands, arms, body) or have something constraining them (such as if they’re on a bus, carrying things, or out in public)?

Does the task involve spatial manipulation, navigation, or natural mapping (like swiping, grabbing, rotating)?

Use gesture-based interaction when it fits natural human behavior and the user’s environment. For example, in augmented reality (AR), immersive experiences, or hands-free contexts, such as smart homes, TV, and public kiosks, gestures often feel more natural than, for instance, typing. However, when tasks demand precision, privacy, or subtlety, such as typing a password, traditional controls may be better to stick with.

In this video, Frank Spillers explains how augmented reality uses spatial mapping to support natural, intuitive gestures that align with a user’s real environment.

2. Identify Appropriate Gesture Vocabulary: Simple, Intuitive, and Discoverable

Designing gestures means you’ll need to define a gesture set (known as a vocabulary) that users can learn, remember, and execute with ease. They’ll arrive on your product with preconceived ideas, mental models and conceptual models of how things should work. So:

Use natural physical metaphors such as pinching to zoom, swiping to scroll, and waving to dismiss. Because people already know these gestures from real life, users won’t have to think about their meaning; they’ll just go ahead and make them.

Keep gestures simple and physical effort light: Don’t have users make large or strenuous motions; design for small, comfortable hand or finger movements instead.

Ensure users can easily discover gestures: Provide visual or contextual hints so users know gestures exist. Hidden or undocumented gestures, even simple ones, can often go unused or come to light much later for users. Imagine how you might feel finding out by accident many months after using a product that a certain gesture does something you had to do using a long workaround.

Carefully evaluate gesture input properties, such as comfort, learnability, consistency, and robustness, under different conditions.
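To make the idea of natural mapping concrete, here is a minimal sketch of how a pinch could translate into a zoom factor. It assumes a touch surface that reports two finger positions as (x, y) pairs; the function names and the clamping limits are illustrative choices, not part of any particular toolkit.

```python
import math


def distance(p, q):
    """Euclidean distance between two (x, y) touch points."""
    return math.hypot(q[0] - p[0], q[1] - p[1])


def pinch_scale(start_points, current_points, min_scale=0.5, max_scale=4.0):
    """Return a zoom factor from two fingers' start and current positions.

    The factor is the ratio of the current finger spread to the starting
    spread, clamped so an extreme pinch cannot produce an extreme zoom.
    The limits are assumed values for illustration.
    """
    start = distance(*start_points)
    if start == 0:
        return 1.0  # fingers started on the same point; no meaningful ratio
    raw = distance(*current_points) / start
    return max(min_scale, min(max_scale, raw))
```

Spreading two fingers from 100 to 200 units apart yields a factor of 2.0, so the content grows in direct proportion to the movement, which is what makes the gesture feel like stretching a physical object.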

In this video, William Hudson, User Experience Strategist and Founder of Syntagm Ltd., explains how clear conceptual models help users understand your system, which is essential when you design gesture vocabularies that build on users’ existing expectations.

3. Pick the Right Sensing and Recognition Technology: Match Gesture to Hardware

Gesture-based interaction is only possible when the system can reliably detect and interpret human movement. Your choice of hardware and sensing technology affects what gestures work, and how well, and options include:

Touchscreens and touchpads for basic gestures like tap, pinch, and swipe.

Depth-sensing cameras, infrared sensors, and motion trackers for midair gestures, full-body or hand tracking, such as in AR and VR, smart TVs, or contactless UIs.

Wearables or motion controllers when fine-gesture detection or finger-level precision is needed.

Make sure the recognition system handles variability: different hand sizes, lighting conditions, user posture, speed of movement, and accidental gestures. For example, think of the potential for error and frustration if a user accidentally triggers a system response when all they meant to do was scratch an itch. Or what if your system didn’t register their movement the first time and they have to make a second or third, more exaggerated gesture? Well-designed gesture recognition depends on robust sensing, accurate modeling, and testing across real-world scenarios, so be sure to account for all three.
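One common way to reduce accidental triggers, such as the itch-scratching example above, is to accept a gesture only when the recognizer reports it confidently for a short, continuous period. The sketch below assumes a hypothetical recognizer that emits a label, a confidence score, and a timestamp for each frame; the class names and the threshold values are illustrative and would need tuning through testing.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    gesture: str       # label from a hypothetical recognizer, e.g. "thumbs_up"
    confidence: float  # 0.0 to 1.0
    timestamp: float   # seconds


class GestureFilter:
    """Accept a gesture only after it is seen confidently for a minimum time."""

    def __init__(self, min_confidence=0.8, min_hold_s=0.3):
        self.min_confidence = min_confidence  # assumed value
        self.min_hold_s = min_hold_s          # assumed value
        self._candidate = None
        self._since = None
        self._fired = False

    def update(self, d):
        """Feed one detection; return the gesture name once accepted, else None."""
        if d.confidence < self.min_confidence:
            # A low-confidence frame resets the hold timer.
            self._candidate = None
            self._since = None
            self._fired = False
            return None
        if d.gesture != self._candidate:
            # A new candidate gesture starts its own hold timer.
            self._candidate = d.gesture
            self._since = d.timestamp
            self._fired = False
        if not self._fired and d.timestamp - self._since >= self.min_hold_s:
            self._fired = True  # fire once per continuous hold
            return d.gesture
        return None
```

The trade-off is worth testing with real users: a longer hold time cuts false triggers but adds delay, and a threshold that is too strict forces the exaggerated repeat gestures described above.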

4. Provide Clear Feedback and Affordance: Make the Interface Communicative and Responsive

Because gestures often involve invisible or midair movements, users need immediate, understandable feedback to feel in control. When you design gesture-based interaction:

Use visual or auditory cues (icons, highlights, and sound) so users know the system recognized their gesture successfully.

Provide real-time response, because lag or misrecognition breaks the illusion of natural interaction; even a fraction of a second can make the difference between delight and frustration.

Indicate possible actions: for example, with overlay hints or subtle animation that invites gestures. Affordances, which you’ll find in the physical world in the form of door handles, for example, need to help users in their digital experiences, too.

This kind of feedback helps bridge the gap between physical motion and digital reaction, which makes gesture interaction feel natural and reliable for the user, who’ll feel more engaged in a seamless experience because of your considerate and empathetic design.

Enjoy this video, which explains how clear affordances and signifiers help users understand what actions are possible, which is essential for making gesture-based interfaces feel responsive and trustworthy.

5. Test with Real Users and Iterate across Contexts: Address Usability, Accuracy, and Accessibility

Because gesture-based interaction blurs the line between physical behavior and digital response, testing in real contexts is critical. Users vary widely, so include people with different body types, abilities, and use contexts. Think about lighting, seating, posture, and the various situations in which different people may encounter your design solution. So, be sure to test for:

Accuracy and error rates, which includes testing for false positives and false negatives.

Physical fatigue or discomfort over time. Gesturing constantly, especially using midair, large, or high gestures, can cause fatigue or discomfort, so pick gestures that permit comfortable, repeatable motions. For example, just because a user doesn’t have to make a grand-sweeping arc or karate-chop the air in front of them doesn’t mean making many far gentler, less dramatic movements in a day won’t wear them out or cause some kind of muscle strain.

Discoverability and learnability: Do users discover gestures and remember them? Make them ultra-easy for users to find, learn, and take to.

User satisfaction: Does gesture interaction feel natural and usable compared with traditional interfaces? Does it bring users enough enjoyment, novelty, and emotional engagement, and help make a seamless experience all the better and more pleasurable?
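For the accuracy item above, a simple tally of test trials can turn observations into comparable numbers. The sketch below assumes each trial is logged as a pair: whether the participant intended to gesture, and whether the system reacted. The function name and data shape are illustrative, and the sketch deliberately ignores a third kind of error, where the system recognizes the wrong gesture.

```python
def gesture_error_rates(trials):
    """Compute false-positive and false-negative rates from test trials.

    Each trial is a pair (intended, recognized): `intended` is True when the
    participant meant to gesture, `recognized` is True when the system reacted.
    """
    intended = [r for i, r in trials if i]
    unintended = [r for i, r in trials if not i]
    false_negatives = sum(1 for r in intended if not r)   # missed gestures
    false_positives = sum(1 for r in unintended if r)     # accidental triggers
    return {
        "false_negative_rate": false_negatives / len(intended) if intended else 0.0,
        "false_positive_rate": false_positives / len(unintended) if unintended else 0.0,
    }
```

Comparing these two rates across lighting conditions, postures, and participants can show whether a problem lies in the gesture itself or in the conditions under which it is sensed.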

A powerful way to stay one step ahead of your users and come out of testing with better results is to design using personas, research-based representations of real users. You can plug these personas into your design process, including through design mapping to shed light on even more valuable insights to help fine-tune your design solution.

In this video, William Hudson explains how design maps connect your personas to key design elements so you can make more informed design decisions.

6. Respect Accessibility, Inclusivity, and Alternative Control Methods: Provide Fallback Options

In certain contexts, gesture-based interaction can support accessibility; for example, by offering alternative input modes to users who have difficulty with keyboards or touchscreens. Even so, gestures can also present challenges for people with motor disabilities, so accessibility benefits depend on the specific user needs, gesture design, and system capabilities. Therefore:

Offer alternative input methods, such as buttons, voice, and touch, alongside gestures.

Support adjustable sensitivity, gesture customization, or gesture disabling for comfort and accessibility.

Consider motor disabilities, cultural differences, and user preferences when defining gesture sets.
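The recommendations above can be sketched as a small settings model in which every action keeps a non-gesture route, and users can disable, remap, or tune gestures. All names, default bindings, and the sensitivity formula below are hypothetical, chosen only to illustrate the structure.

```python
from dataclasses import dataclass, field


@dataclass
class GestureSettings:
    gestures_enabled: bool = True
    sensitivity: float = 1.0                       # hypothetical multiplier
    disabled: set = field(default_factory=set)     # gestures the user turned off
    remap: dict = field(default_factory=dict)      # action -> user's own gesture


# Illustrative defaults; a real product would define its own.
DEFAULT_BINDINGS = {"confirm": "thumbs_up", "dismiss": "wave"}
FALLBACKS = {"confirm": ["button", "voice"], "dismiss": ["button", "voice"]}


def available_inputs(action, settings):
    """List the input methods a user can use to trigger `action`."""
    inputs = list(FALLBACKS.get(action, ["button"]))
    gesture = settings.remap.get(action, DEFAULT_BINDINGS.get(action))
    if settings.gestures_enabled and gesture and gesture not in settings.disabled:
        inputs.insert(0, f"gesture:{gesture}")
    return inputs


def confidence_threshold(settings, base=0.8):
    """Higher sensitivity lowers the confidence needed to accept a gesture."""
    return min(1.0, base / max(settings.sensitivity, 0.1))
```

Because the fallback list is built first and the gesture is added only when allowed, turning gestures off never leaves an action unreachable.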

In this video, Alan Dix, Author of the bestselling book “Human-Computer Interaction,” and Director of the Computational Foundry at Swansea University, explains how cultural differences shape what people can perceive and understand in an interface, reminding you to design gesture alternatives that respect diverse abilities and contexts.

Why Gesture-Based Interaction Matters and the Benefits of Getting It Right

Far into the future, gesture-based interaction will likely coexist with touch, voice, and other inputs, each used where it makes the most sense. When you design gesture-based interaction properly, you unlock a range of advantages for user experience, engagement, and future-ready interfaces. The benefits of gesture interaction design done well include:

Intuitive, Natural Interaction: Lowering the Learning Curve

Humans have used gestures since prehistoric times, long before the first typewriters “decided” how your smartphone screen’s keypad looks. By leveraging gestures, something users already know, you reduce friction and increase approachability. Instead of having to learn new UI conventions, users choose hand and body movements they instinctively understand with spatial reasoning. Signaling with gestures makes interfaces feel more human, not machinelike.

Efficiency, Speed, Fluidity: Often Faster than Clicks or Taps

Gestures, such as swiping or pinching, can reduce the number of steps required for frequent tasks and may feel faster or more fluid in certain contexts. Note, though, that their efficiency depends on factors like gesture recognition accuracy, system latency, task complexity, and user familiarity.

Engagement, Hedonic Value, and Emotional Delight

Gesture-based interaction often feels more embodied and playful than traditional interfaces; physicality, immediacy, and novelty add layers of enjoyment, expressive ability, and personality. This emotional appeal can boost satisfaction, encourage exploration, and raise long-term user engagement as users get deeper into more delightful experiences in more expressive ways than just typing.

Accessibility and Inclusion: Offering Alternative Interaction Modes

Gesture-based input can serve as an alternative interaction method for users who find traditional inputs like keyboards or touchscreens difficult to use. However, for users with certain motor or cognitive disabilities, gesture input may introduce usability challenges unless you specifically design it with accessibility in mind. In any case, accessibility is a legal requirement in many parts of the world, which makes it a particularly powerful consideration to “bake” into your interaction design process.

Pick up some essential insights as this video explains why accessible design ensures that digital experiences remain usable and enjoyable for people with disabilities and other users, too.

Preparing for Future Interfaces: Beyond Screens and Buttons

As computing moves beyond phones and laptops and into AR/VR, smart environments, wearables, and ambient computing, gesture-based interaction design offers a path forward. It suits contexts where screens are inconvenient, unavailable, or inappropriate. While you might look at your smartphone’s screen with some disbelief on reading that, consider what UX design might look like in 20, 30, or even 40 years’ time. Sure, screens will likely still be essential ways to interact in many cases, but consider the exciting advancements of in-car systems, smart home control, and immersive spaces available now, too.

Exciting times lie ahead. Remember to design for thoughtfully integrated interactions, rather than “cool add-ons,” so users don’t feel that your interaction design based on gestures is gimmicky, intrusive, unnecessary, or too complex.

Overall, gesture-based interaction challenges you to think of interfaces not as flat canvases with buttons, but as entire living spaces of movement, physical intuition, and human behavior. When you design intentionally, interaction based on gestures becomes a powerful way to make technology feel natural, human, and alive.

Therefore, investigate how this form of interaction may help you design products that impress users and keep them coming back long into the future. Because gestures are timeless and intuitive, they’ll soon become more of a design reality, with many more applications featuring this mode of interaction. Instead of featuring only in science-fiction stories set a lifetime away, they’ll be within the grasp of many more users, whether to order pizzas, search for items, or enjoy more realistic games: wherever you can take them, designer.