The Hawthorne effect is a bias where people temporarily change their behavior when they know they’re being observed. It often manifests as improved performance or exaggerated engagement but does not reflect normal behavior. UX (user experience) researchers and designers should understand the effect and mitigate its influence on user behavior.

One way to check that the Hawthorne effect hasn’t distorted your research data is triangulation. This is where you combine multiple methods, data sources, investigators, or theories to confirm and validate your findings. William Hudson, User Experience Strategist and Founder of Syntagm Ltd, explains more:

How the Hawthorne Effect Became a Concern for Design

The Hawthorne effect became a psychological term—and consequently a design concern—after studies at the Western Electric Hawthorne Works in Cicero, Illinois, U.S.A. in the 1920s. Researchers altered working conditions in the telecommunications equipment factory, including by changing lighting and adjusting break times. They found that worker productivity consistently improved regardless of the change. For example, even in poorer lighting, the test group outperformed the control group—the workers whose conditions remained unchanged.

In the 1950s, sociologist Henry A. Landsberger, who coined the term “the Hawthorne effect,” reinterpreted the results. Landsberger believed that the test-group participants were more productive because they had received unusual extra attention as they participated in the study. The changes in the work environment hadn’t caused the workers’ improved performance; the workers’ awareness of being studied had made them behave more productively.

Although later research has questioned the extent and validity of the Hawthorne effect, researchers have documented it across various settings, from medical trials to education and, crucially, UX and user research. This means designers, who already have to be mindful of biases when they consider users, data, and potential solutions, need to watch out for the Hawthorne effect in UX work and mitigate it.

Solid research is vital for design solutions that make the best sense to users—and make the best sense of what users experience, need, and desire in their everyday lives. Watch as William Hudson explains the basics of user research, and how to approach it step by step:

Designers Who Understand the Hawthorne Effect Do Better

The effect can seem advantageous for authority figures, from factory managers and business owners to teachers and parents. For example, imagine how a school classroom can become chaotic moments after a teacher briefly steps out—and quietens quickly when the students notice the teacher returning. Speed cameras are another example of how surveillance encourages good behavior.

However, the effect can sabotage design if researchers and designers fail to notice it, or if they let confirmation bias help it mask problems below the surface. For example, if designers have assumptions about what users want, they might look for things while observing users that confirm those assumptions and ignore evidence that conflicts with what they believe. So, if users are already acting out of character during a user test, designers face a double disadvantage: the “fake” behavior can lead them to look for and believe false things about the test prototype or solution. UX designers and researchers can run into the Hawthorne effect anywhere in the UX design process when they have contact with users, for example in usability tests, field studies, or interviews. Anytime researchers observe users, and users know they’re under observation, the effect can arise and create problems for several reasons.

1. It Alters Authentic User Behavior

When test participants feel judged, even subtly, they may:

Try to impress the researcher or facilitator.

Avoid making mistakes, even if it means using workarounds.

Say what they think researchers want to hear.

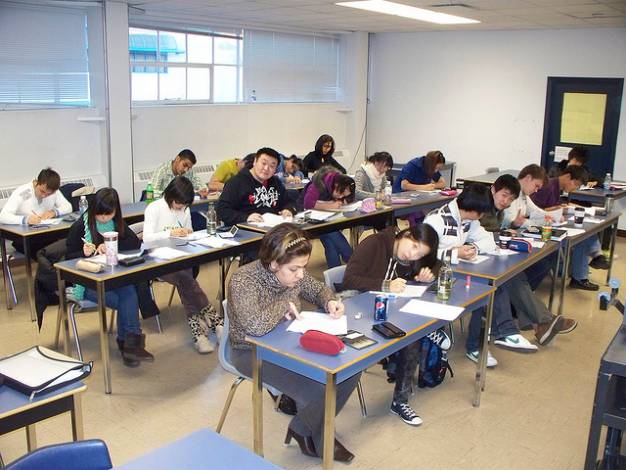

These behaviors pollute research findings, especially if the researcher or designer relies on a small sample size or tests high-stakes flows like onboarding, e-commerce checkouts, or data entry. The users don’t act like natural users but like students in exam conditions. To avoid potential conflict—or judgment—they put on a show where they strive to do what they think is “right,” and not what they’d do if they were alone with the design solution or prototype.


The sense of being watched can change everything, even how people perform the simplest actions.

© Photo by Burst, CC BY-SA 4.0

2. It Creates False Confidence in Design

For example, a user researcher might observe users breezing through a checkout flow during moderated tests and believe the website works well. However, if users are successful only because they feel pressure to perform, it’s a false positive and an unhelpful indicator. If the designer accepts the results as healthy signs of their website and the site goes live, the live product might see drop-offs, hesitation, or abandonment. The best designs must reflect great empathy for the user—and cater to how they truly think and feel.

Watch this video to understand more about how to design with empathy and advocate for users:

3. It Skews Feedback and Testing Metrics

Hawthorne-driven behavior may:

Inflate success rates in usability-related tasks.

Suppress negative feedback in interviews.

Cause underreporting of confusion or frustration.

These distortions can mislead teams into thinking their designs are more effective than they really are. For instance, if users pretend not to care about design issues that annoy or confuse them, they become actors. The moment they act “out of character” is when data and feedback can distort the real picture. If designers follow the distorted information, they can end up creating tools that don’t accurately reflect reality. For example, designers often rely on personas, fictitious representations of real users, to guide design decisions, and personas built on distorted data will misrepresent those users.

Watch William Hudson explain why design without personas falls short:

Where Do You Find the Hawthorne Effect in UX Research?

The effect can sneak into nearly every common UX research method:

Moderated Usability Tests

When researchers conduct moderated usability tests, users are acutely aware of observation and less likely to be “themselves.”

Contextual Inquiries

When researchers go to users in the latter’s “natural” environments, users know they’re part of a study and might adjust what they say or do to get a “passing grade.”

Think-Aloud Protocols

When researchers ask users to think out loud so they declare how they approach a problem or use a design, users may verbalize what they believe sounds smart rather than their genuine thoughts.

Eye-Tracking Studies

If users have equipment attached to them, such as eye-tracking gear to see where they look at a screen and for how long, they’ll have a heightened awareness of being watched. This may make them more likely to act like “device-wearers” than naturally behaving individuals.

Surveys and Interviews

Participants may answer questions to please the interviewer or align with perceived expectations. It could be because they want to be “nice” or “conventional,” or because they don’t want to explain an issue they might have—they might not want to bother bringing it up, or they might fear they’ll sound “stupid” if they do. Sometimes, and especially if the study concerns potentially negative or unhealthy behavior, they’ll distort facts to avoid potential judgment, such as: “I only drink a couple of beers on the weekend.”

Discover points to watch out for as Ann Blandford, Professor of Human-Computer Interaction at University College London, explains the pros and cons of user interviews.

How to Mitigate the Hawthorne Effect in UX Research

While UX researchers and designers can’t eliminate the Hawthorne effect entirely, they can design smarter studies that reduce its influence.

1. Normalize the Observation

Over time, people typically become desensitized to having someone watch them. So, structure sessions that:

Include a warm-up task to ease users in.

Last long enough to let “performance mode” fade.

Repeat over multiple days or touchpoints, if possible.

This helps behavior become more natural as the novelty wears off.

Explore helpful techniques for how to approach users in this video with Ann Blandford:

2. Use Remote and Asynchronous Tools

Unmoderated tools like Maze or UserTesting allow users to interact in their own environments. They’re less conscious of scrutiny, which yields more authentic interactions.

Better still, these platforms let you test at scale, which helps you more easily spot outliers and detect patterns that emerge only in real-world contexts.

3. Set Expectations Thoughtfully

The language you use during introductions sets the tone. Don’t word things in any way that might remind users of school exams, driving tests, or the like. Instead, try this script:

“We’re not testing you; we’re testing our design. If something’s unclear or frustrating, that’s a sign we need to improve it—not that you’re doing anything wrong.”

This reminder lowers pressure and increases honesty. It helps users drop their guard and act casually—secure in the knowledge that it’s all about the design.

4. Use Naturalistic Environments

Solid ethnographic research can bring precious insights, so observe users using the product:

In their own homes.

During actual tasks.

On their preferred devices.

Avoid artificial lab setups unless you have no alternative.

If you can’t observe users directly, cultural probes offer a great way to get authentic insights from them, as Alan Dix, Author of the bestselling book “Human-Computer Interaction” and Director of the Computational Foundry at Swansea University, explains:

5. Anonymize Feedback Where Appropriate

Let users respond anonymously if you must gather sensitive insights. They’re more likely to express honest opinions about confusing UIs, broken features, or irritating workflows when they know their identities are shielded.

Discover important points about data collection in this video:

6. Watch for Contradictions

If a user says something positive but behaves hesitantly, trust the behavior rather than their words. Their words are more likely to have passed through the filter of social desirability.
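If you log both what participants say and what they do, a small script can surface these contradictions for review. The following is a minimal sketch, not a standard method: the `Session` fields, the 1-to-5 ease rating, and every threshold are hypothetical choices that you would tune to your own study.

```python
from dataclasses import dataclass


@dataclass
class Session:
    participant: str
    stated_ease: int        # self-reported rating, 1 (hard) to 5 (easy); assumed scale
    seconds_on_task: float  # observed time to complete the task
    misclicks: int          # observed wrong clicks before success


def contradicts(s: Session, ease_min: int = 4,
                slow_after: float = 60.0, max_misclicks: int = 2) -> bool:
    """Return True when the stated experience is positive but the
    observed behavior looks hesitant. All thresholds are illustrative."""
    says_easy = s.stated_ease >= ease_min
    looked_hard = s.seconds_on_task > slow_after or s.misclicks > max_misclicks
    return says_easy and looked_hard


# Made-up sessions for illustration only.
sessions = [
    Session("P1", stated_ease=5, seconds_on_task=35.0, misclicks=0),
    Session("P2", stated_ease=5, seconds_on_task=95.0, misclicks=4),
    Session("P3", stated_ease=2, seconds_on_task=110.0, misclicks=5),
]

flagged = [s.participant for s in sessions if contradicts(s)]
print(flagged)
```

A flagged session is a prompt to rewatch the recording, not proof of a problem; the participant may simply have been distracted.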

7. Apply Methodological Rigor

Take care in every contact with users. For example, well-planned longer sessions that put users at ease can help expose valuable insights, but remember to stay quietly observant and let them feel the absence of a “spotlight” on them.

8. Use Triangulation

Confirm and validate your findings with other methods, such as surveys and analytics to complement interviews. You have a wealth of ways to approach what you learn about users through qualitative research and quantitative research. UX researchers frequently use methodological triangulation like this to back up their findings.
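One simple form of triangulation is to compare the same task’s success rate across an observed source and an unobserved one, such as moderated sessions versus unmoderated tests or product analytics. The sketch below assumes you have success counts from both sources. The function name and the numbers are hypothetical, and the two-proportion z-test is only a rough approximation for samples this small.

```python
import math


def success_gap(mod_success: int, mod_total: int,
                unmod_success: int, unmod_total: int) -> tuple[float, float]:
    """Return (difference in success rate, z statistic) for
    moderated versus unmoderated task completion."""
    p_mod = mod_success / mod_total
    p_unmod = unmod_success / unmod_total
    # Pooled proportion under the assumption that both rates are equal.
    pooled = (mod_success + unmod_success) / (mod_total + unmod_total)
    se = math.sqrt(pooled * (1 - pooled) * (1 / mod_total + 1 / unmod_total))
    z = (p_mod - p_unmod) / se if se > 0 else 0.0
    return p_mod - p_unmod, z


# Illustrative numbers only: 18 of 20 moderated participants succeeded,
# compared with 55 of 100 unmoderated participants.
gap, z = success_gap(18, 20, 55, 100)
print(f"gap = {gap:.2f}, z = {z:.2f}")
```

A large positive gap, where observed users do far better than unobserved ones, is one signal that observation may be inflating performance. It is worth ruling out other differences between the two groups, such as recruitment or device, before blaming the Hawthorne effect.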

Pick up valuable insights about the difference between qualitative and quantitative research, both essential ways to learn about users, as William Hudson explains:

Case Example: Remote Testing Beats Moderated Labs

A SaaS company redesigned its dashboard based on lab testing insights. In moderated sessions, users easily found and used a “Create Project” button. However, in production, few users clicked it. Conversion stalled.

The team retraced their steps and ran unmoderated tests using a tool like PlaybookUX. Without an observer present, users struggled to locate the button—it was above the fold, far from where their mouse naturally moved.

The conclusion: the Hawthorne effect had inflated performance during observed sessions. Users had “performed well” under the spotlight, not because the design was intuitive, but because they were extra focused.

This insight helped the team redesign the interface, increasing engagement by 42%.

Consider the Psychology behind the Hawthorne Effect

To strengthen your research, and what you design for your brand and users, remember the complexity of the human mind when you approach users. The Hawthorne effect shares traits with:

Social desirability bias: The tendency to say or do what seems most acceptable.

Evaluation apprehension: Anxiety about being judged or evaluated.

Demand characteristics: Subtle cues that lead participants to guess the purpose of a study and act accordingly.

Together, these influences form a complex web of cognitive bias that researchers must navigate. For example, a researcher working on a brand’s meditation or calm-inducing app might want to consider how answers like “I have fairly normal stress levels” might paint a picture that differs from the user’s true mental state and skew the feedback they give. Perhaps users are concerned about sounding neurotic, or worried about divulging that they hate their job, because they think an interview about stress management means questions about triggers. In any case, the researchers’ best defenses are awareness, humility, and triangulation.

Explore how awareness of users’ contexts helps to create designs that meet them on their level as Alan Dix explains:

Ethical Considerations: Transparency Without Compromise

Twenty-first-century people already inhabit a heavily recorded world—a fact of life with not just CCTV cameras recording for security purposes but also numerous smartphones, doorbell cameras, and other devices capturing sights and sounds frequently throughout a typical day. Even if they’re not the center of attention in the recording, the fact that a device may be recording nearby can be enough to make camera consciousness become camera anxiety for some people. Few individuals like to be caught on film doing or saying the wrong thing. Consider this when you ask users about matters in recorded interviews.

Always ask users for consent to be recorded, and be open and transparent about how you will use the recording afterwards. Researchers who consider a question like “Can I avoid disclosing what I’m testing to avoid bias?” should be quick to answer with a “no.” Masking study goals may help reduce demand characteristics, but full ethical transparency is non-negotiable.

Let users know:

They’re being observed and, if applicable, recorded.

Their feedback is valued, not judged.

Their data is safe and anonymized.

Ethical research builds long-term trust—both with users and stakeholders.

Find essential points about how to plan an observational study in this video:

Above all, insight, humility, skill, and empathy can help researchers lessen the Hawthorne effect’s potential to derail design efforts. Humans are self-conscious social beings. In UX design, this insight is both a caution to tread carefully and an opportunity to access the truth of how users think, feel, and act.

Beware of the “watched = botched” dynamic; people won’t declare when they’re not being frank. You can’t know what they might be thinking deep down. “I can’t do this when you’re watching!” may be too awkward to say aloud, but it might surface in other ways, such as a user’s frustration after they’ve rushed an action because they felt self-conscious and “under the microscope.” Maybe they’re afraid of feeling stupid if they can’t use your design or prototype, an unfair self-judgment that arises needlessly, and they need you to reassure them.

Most importantly, stay humble. What users say—and even what they show—might not fully represent what they truly experience. Designers have a golden opportunity to bridge that gap, observe wisely, and design with empathy rooted in reality. UX design thrives on authenticity so designers can create experiences that reflect real-world needs—not staged performances. That’s not just good research—it’s great design.