Augmented reality has come a long way from a science-fiction concept to a science-based reality. Until recently, the costs of augmented reality were so substantial that designers could only dream of working on projects that involved it. Today things have changed, and augmented reality is even available on mobile handsets. That means design for augmented reality is now an option for UX designers of all shapes and sizes.

Augmented reality is a view of the real, physical world in which elements are enhanced by computer-generated input. These inputs can range from sound and video to graphics, GPS overlays and more. Augmented reality was first conceived in a novel written by L. Frank Baum in 1901, in which a set of electronic glasses mapped data onto people; the glasses were called a “character marker”. Today, augmented reality is a working technology rather than a science-fiction concept.

A Brief History of Augmented Reality (The Past)

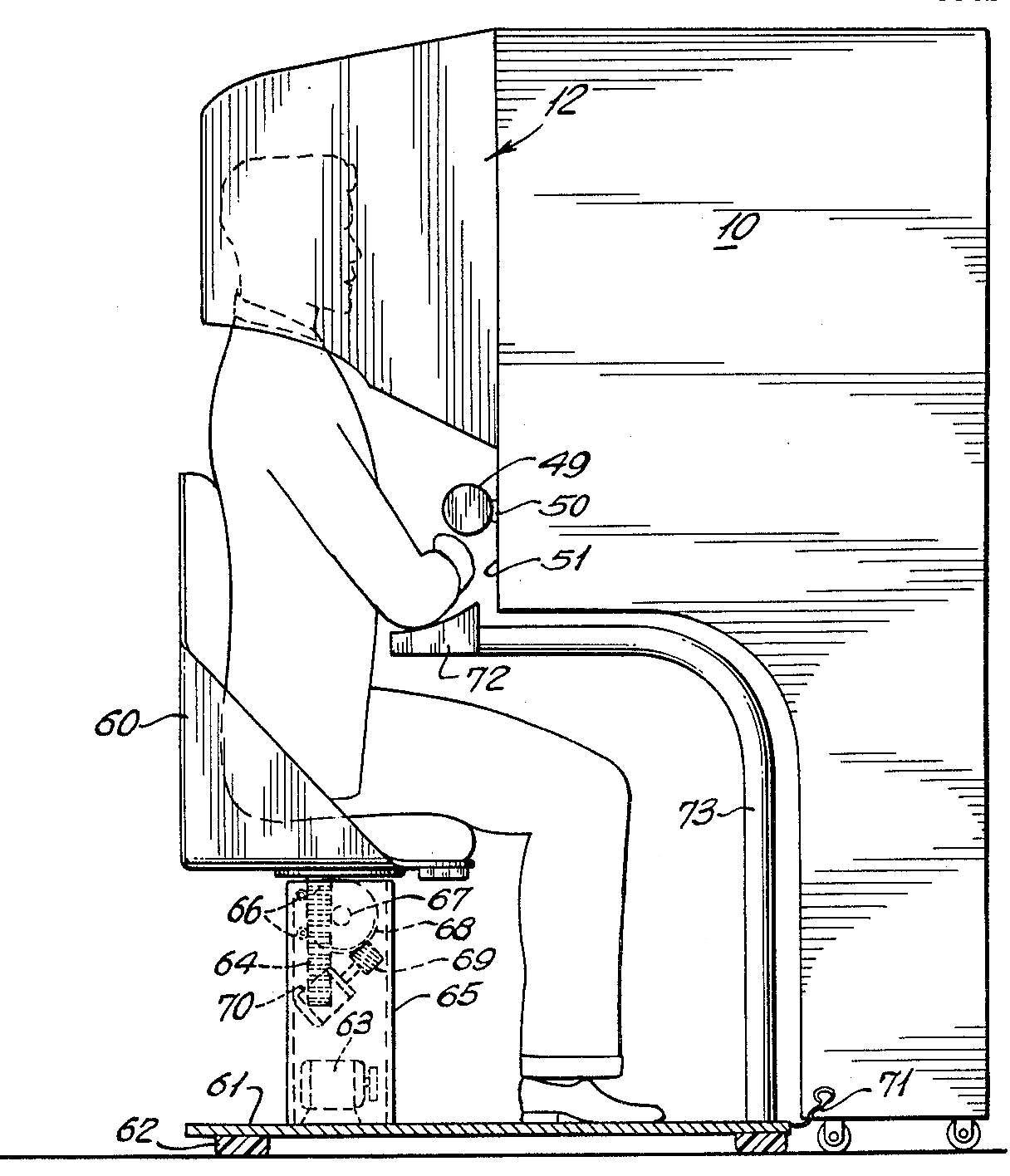

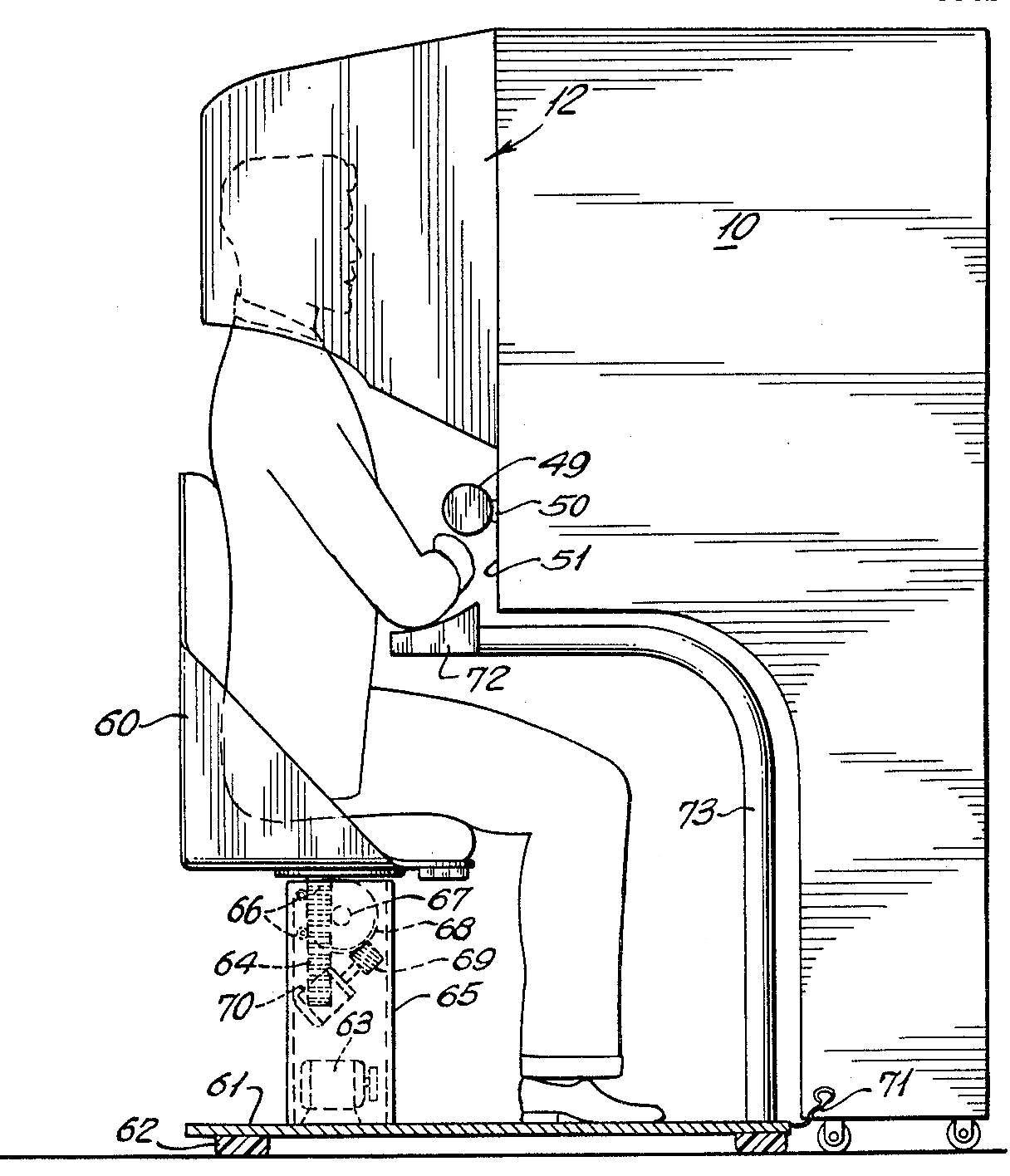

Augmented reality was first achieved, to some extent, by a cinematographer called Morton Heilig in 1957. He invented the Sensorama, which delivered visuals, sounds, vibration and smell to the viewer. It wasn’t computer controlled, but it was the first example of an attempt to add extra sensory data to an experience.

Author/Copyright holder: Morton Heilig. Copyright terms and license: Public Domain.

Then, in 1968, Ivan Sutherland, the American computer scientist and an early influence on the Internet, invented the head-mounted display as a kind of window into a virtual world. The technology available at the time, however, made the invention impractical for mass use.

In 1975, Myron Krueger, an American computer artist, developed the first “virtual reality” interface in the form of “Videoplace”, which allowed its users to manipulate and interact with virtual objects in real time.

Steve Mann, a computational photography researcher, gave the world wearable computing in 1980.

Of course, back then these inventions weren’t called “virtual reality” or “augmented reality”. The term “virtual reality” was coined by Jaron Lanier in 1989, and Thomas P. Caudell of Boeing coined the phrase “augmented reality” in 1990.

The first properly functioning AR system was probably Virtual Fixtures, developed by Louis Rosenberg at the USAF Armstrong Laboratory in 1992. It was an extremely complex robotic system, designed to compensate for the lack of high-speed 3D graphics processing power in the early 1990s. It overlaid sensory information on a workspace to improve human productivity.

There were many other breakthroughs in augmented reality between then and today; the most notable include:

Bruce Thomas developing an outdoor mobile AR game called ARQuake in 2000

ARToolKit (an open-source tracking library for AR) being made available in Adobe Flash in 2009

Google announcing its open beta of Google Glass (a project with mixed successes) in 2013

Microsoft announcing augmented reality support and its augmented reality headset, HoloLens, in 2015

The Current State of Play in Augmented Reality (The Present)

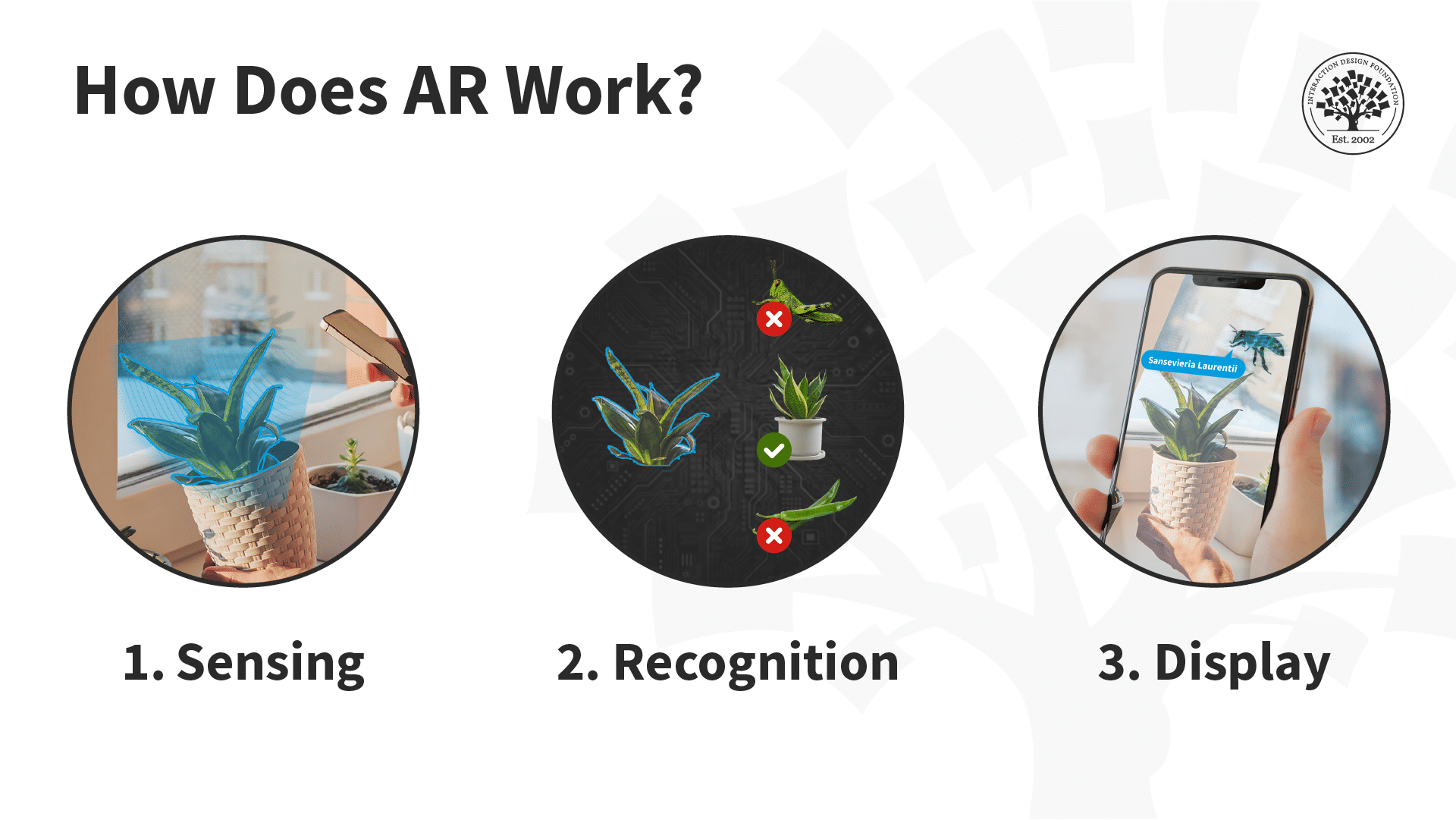

Augmented reality is achieved through a variety of technologies, which can be used on their own or in combination. They include:

General hardware components: the processor, the display, the sensors and the input devices. A typical smartphone contains a processor, a display, accelerometers, GPS, a camera, a microphone and so on, which is all the hardware required for an AR device.

Displays: while a monitor is perfectly capable of displaying AR data, there are other options. These include optical projection systems, head-mounted displays, eyeglasses, contact lenses, the HUD (heads-up display), virtual retinal displays and EyeTap, a device that changes the rays of light captured from the environment and substitutes them with computer-generated ones. Other options are Spatial Augmented Reality (SAR), which uses ordinary projection techniques as a substitute for a display of any kind, and handheld displays.

Sensors and input devices: GPS, gyroscopes, accelerometers, compasses, RFID, wireless sensors, touch recognition, speech recognition, eye tracking and peripherals.

Software: most future AR development will go into software that takes advantage of these hardware capabilities. There is already an Augmented Reality Markup Language (ARML), which is being used to standardize an XML grammar for augmented reality. Several software development kits (SDKs) also offer simple environments for AR development.

AR apps are available, or being researched, in nearly every industrial sector, including:

Archaeology, Art, Architecture

Commerce, Office

Construction, Industrial Design

Education, Translation

Emergency Management, Disaster Recovery, Medical and Search and Rescue

Games, Sports, Entertainment, Tourism

Military

Navigation

Author/Copyright holder: Sonk54. Copyright terms and license: CC BY-SA 3.0

The Future of Augmented Reality

Jessica Lowry, a UX designer writing for The Next Web, says that AR is the future of design, and we tend to agree. Mobile phones are already such an integral part of our lives that they might as well be extensions of our bodies. As technology is integrated further into our lives (à la Google Glass) without being intrusive, augmented reality offers enormous opportunities to enhance user experiences.

This will almost certainly drive major advances in the much-hyped but still little-seen Internet of Things. UX designers in the AR field will need to think seriously about how traditional experiences can be improved through AR. Just making your cooker capable of using computer enhancements is not enough; it needs to lead to healthier eating or better-cooked food for users to care.

The future will belong to AR when it improves task efficiency or the quality of the output of an experience for the user. This is the key challenge for the UX profession in the 21st century.

Author/Copyright holder: Austin Berner. Copyright terms and license: Public Domain

The Takeaway

Augmented reality (AR) has gone from pipe dream to reality in just over a century. There are many AR applications in use or under development today. However, the concept will only take off universally when UX designers think about how to integrate AR with daily life to improve productivity, efficiency or the quality of experiences. AR’s potential is vast; the big question is: how will it be unlocked?

References & Where to Learn More:

Did L. Frank Baum Predict Augmented Reality or Warn Us About Its Power? Some food for thought.

Ivan Sutherland’s research can be found here: http://90.146.8.18/en/archiv_files/19902/E1990b_123.pdf

Steve Mann’s research can be read here: “Eye Am a Camera: Surveillance and Sousveillance in the Glassage”, Techland.time.com

Rosenberg’s original research paper was published as: L. B. Rosenberg. The Use of Virtual Fixtures As Perceptual Overlays to Enhance Operator Performance in Remote Environments. Technical Report AL-TR-0089, USAF Armstrong Laboratory, Wright-Patterson AFB OH, 1992.

Find out more about ARQuake at Wikipedia.

Learn more about Google Glass at the New York Times.

Jessica Lowry’s article: Augmented reality is the future of design

Hero Image: Author/Copyright holder: Maurizio Pesce. Copyright terms and license: CC BY 2.0