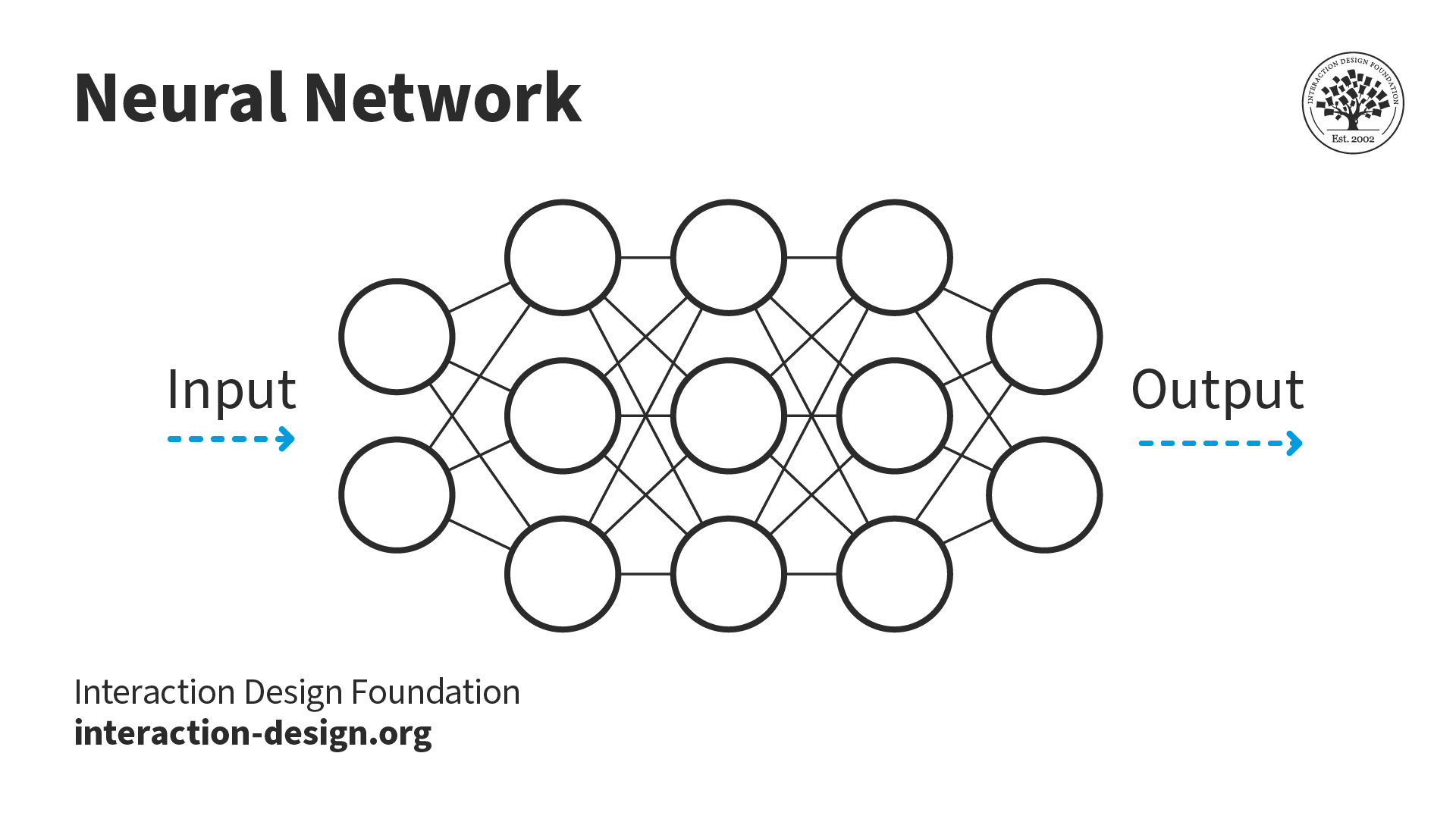

Neural networks are a type of artificial intelligence that can learn from data and perform tasks such as recognizing faces, translating languages, and playing games. They are inspired by the structure and function of the human brain, which consists of billions of interconnected cells called neurons. A neural network is made up of layers of artificial neurons that process information and pass it on to one another. Each connection between neurons carries a weight, and each neuron has a threshold (often called a bias); together these determine how strongly a neuron's output contributes to the next layer. Neural networks are most often trained with gradient descent, using backpropagation to compute the gradients, although alternatives such as genetic algorithms also exist. They come in different architectures, such as feedforward, recurrent, convolutional, and generative adversarial networks. Neural networks are powerful tools for artificial intelligence because they can adapt to new data and situations, generalize from previous examples, and discover hidden patterns and features in the data.

Neural networks consist of input and output layers (far left and right) as well as intermediary hidden layers.

© Interaction Design Foundation, CC BY-SA 4.0
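To make the idea of layers, weights, and thresholds concrete, here is a minimal sketch of how a small feedforward network turns inputs into an output. It is an illustration only: the sigmoid activation, the function names, and the numbers in `EXAMPLE_LAYERS` are assumptions chosen for this example, not values from any real system.

```python
import math


def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def layer_forward(inputs, weights, biases):
    """Compute one layer: every neuron takes a weighted sum of the inputs,
    adds its bias (the threshold), and applies the activation function."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(sigmoid(total))
    return outputs


def network_forward(inputs, layers):
    """Pass the inputs through each layer in turn, from input to output."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations


# Hypothetical network: 2 inputs -> 2 hidden neurons -> 1 output neuron.
# The weights and biases are made up for illustration.
EXAMPLE_LAYERS = [
    ([[0.5, -0.4], [0.3, 0.8]], [0.1, -0.2]),  # hidden layer
    ([[1.2, -0.7]], [0.05]),                   # output layer
]

if __name__ == "__main__":
    print(network_forward([1.0, 0.0], EXAMPLE_LAYERS))
```

Training a network means adjusting the numbers in `EXAMPLE_LAYERS` until the outputs match the desired targets; the forward pass itself stays the same.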

History of Neural Networks in AI

Neural networks are one of the main tools and methods used in AI research and applications. Here is a brief history of neural networks in AI:

1943: Walter Pitts and Warren McCulloch propose a mathematical model of artificial neurons that can perform logical operations.

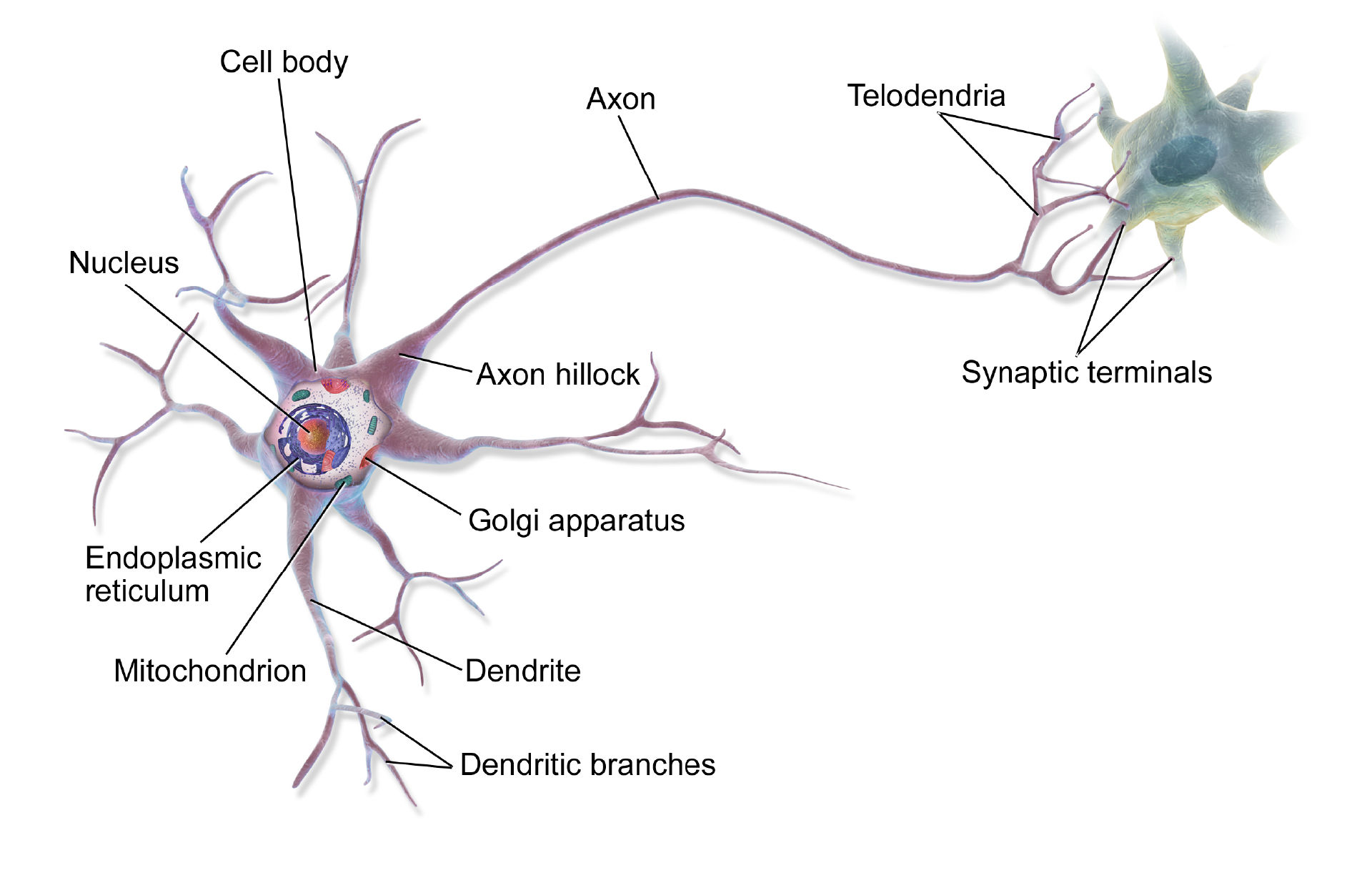

Diagram of a human neuron. Signals are received through the dendrites (left) and sent out through the axon (right).

CC BY-SA 4.0 ShareAlike (https://creativecommons.org/licenses/by-sa/4.0/)

© Interaction Design Foundation, CC BY-SA 4.0

1957: Frank Rosenblatt develops the perceptron, a single-layer neural network that can learn to classify linearly separable patterns.

1969: Marvin Minsky and Seymour Papert publish a book called Perceptrons, which shows the limitations of single-layer neural networks and discourages further research.

1970: Seppo Linnainmaa describes reverse-mode automatic differentiation, the technique underlying the backpropagation algorithm, which can efficiently compute the gradients of a multi-layer neural network.

1980: Kunihiko Fukushima proposes the neocognitron, a hierarchical neural network that can recognize handwritten digits and other patterns.

1982: John Hopfield introduces the Hopfield network, a recurrent neural network that can store and retrieve patterns as attractors of its dynamics.

1986: David Rumelhart, Geoffrey Hinton, and Ronald Williams popularize the backpropagation algorithm by showing that it lets multi-layer networks learn useful internal representations. It is later applied to problems such as speech recognition, computer vision, and natural language processing.

1990s: Interest in neural networks declines, both because other machine learning methods such as support vector machines emerge and because the computational resources and data needed to train large-scale models are lacking.

2006: Geoffrey Hinton, Yann LeCun, Yoshua Bengio, and others revive interest in deep learning by showing that pre-training neural networks layer by layer can mitigate the problem of vanishing gradients and improve performance.

2012: Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton win the ImageNet Large Scale Visual Recognition Challenge (ILSVRC) by using a deep convolutional neural network (CNN) that achieves a significant improvement over previous methods.

2014: Ian Goodfellow, Yoshua Bengio, and Aaron Courville publish a book called Deep Learning, which provides a comprehensive overview of the theory and practice of deep learning.

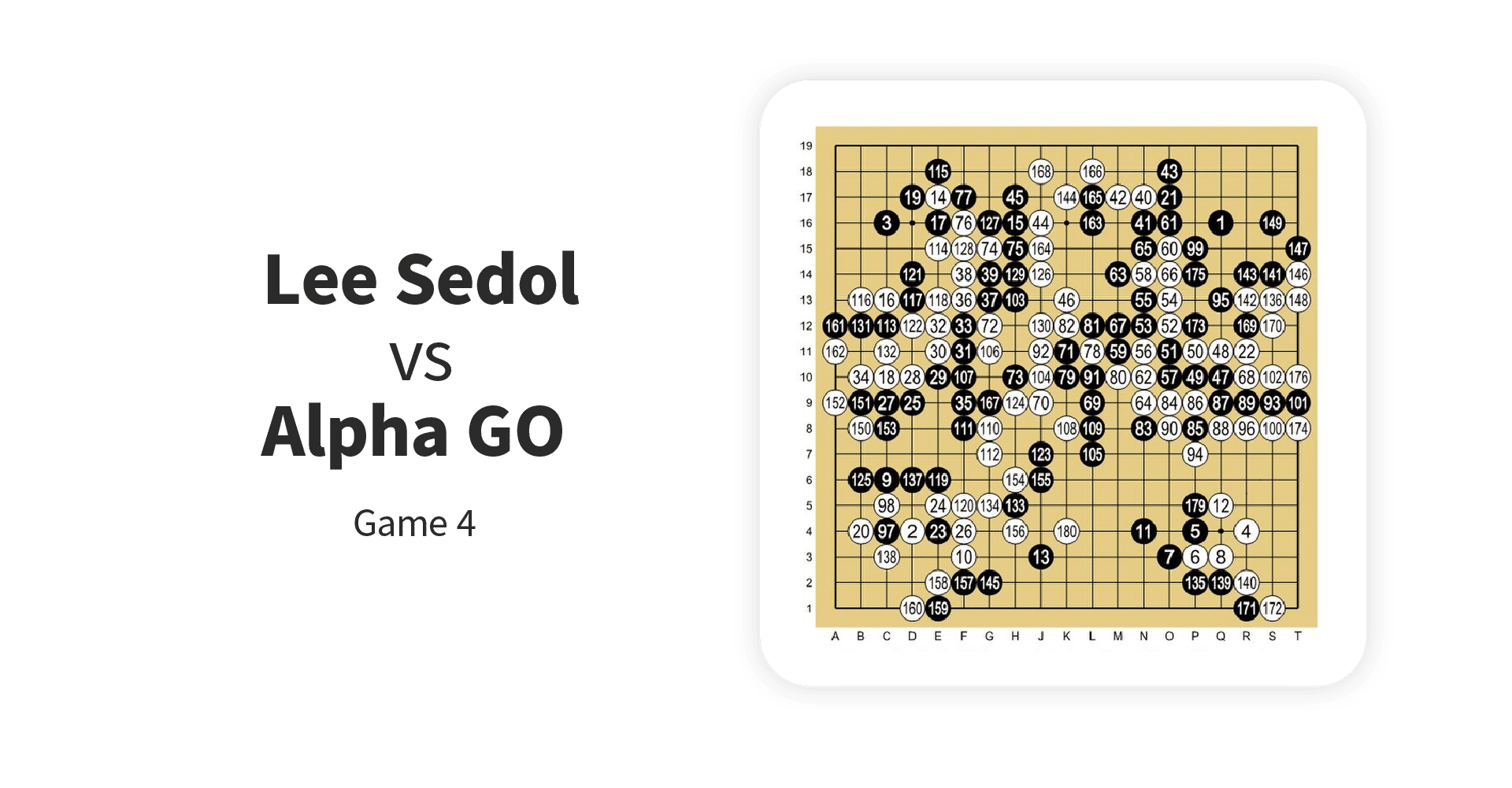

2015: Google DeepMind develops AlphaGo, a deep reinforcement learning system that defeats the world champion of Go, a complex board game that requires human intuition and creativity.

2016: Google Translate launches a new version based on neural machine translation (NMT), which uses an encoder-decoder architecture with attention mechanisms to translate sentences between different languages.

2017: Facebook develops DeepFace, a deep face recognition system that achieves near-human accuracy on the Labeled Faces in the Wild (LFW) dataset.

2018: OpenAI develops GPT, a large-scale generative pre-trained transformer model that can generate coherent and diverse text on various topics.

2018: Google releases BERT (Bidirectional Encoder Representations from Transformers), a model that achieves state-of-the-art results on several natural language understanding tasks such as question answering, sentiment analysis, and named entity recognition.
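The earliest entries in this timeline, the threshold neuron and the perceptron, are simple enough to sketch in a few lines. The example below is a minimal illustration, assuming the classic perceptron learning rule with a learning rate of 1; the function names and the `AND_SAMPLES` and `XOR_SAMPLES` datasets are hypothetical choices made for this sketch. It learns the logical AND function, which is linearly separable, and it also shows the kind of limitation highlighted in Perceptrons: no single-layer perceptron can classify XOR correctly.

```python
def predict(weights, bias, inputs):
    """Threshold unit: output 1 if the weighted sum plus bias is positive."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train_perceptron(samples, learning_rate=1.0, max_epochs=100):
    """Perceptron learning rule: after each misclassified sample,
    shift the weights and bias toward the correct answer."""
    n_inputs = len(samples[0][0])
    weights = [0.0] * n_inputs
    bias = 0.0
    for _ in range(max_epochs):
        errors = 0
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            if error != 0:
                errors += 1
                weights = [w + learning_rate * error * x
                           for w, x in zip(weights, inputs)]
                bias += learning_rate * error
        if errors == 0:  # every sample classified correctly: stop early
            break
    return weights, bias


# Logical AND is linearly separable; XOR is not.
AND_SAMPLES = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]
XOR_SAMPLES = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 0)]

if __name__ == "__main__":
    for name, samples in [("AND", AND_SAMPLES), ("XOR", XOR_SAMPLES)]:
        weights, bias = train_perceptron(samples)
        correct = sum(predict(weights, bias, x) == t for x, t in samples)
        print(name, "correct:", correct, "of", len(samples))
```

Multi-layer networks trained with backpropagation remove this restriction, which is why the later entries in the timeline focus on deeper architectures.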

Applications of Neural Networks

Neural networks have many applications in various domains, such as:

Image recognition: Neural networks can recognize and classify objects, faces, scenes, and activities in images. For example, Facebook uses a deep face recognition system called DeepFace that achieves near-human accuracy on the Labeled Faces in the Wild (LFW) dataset.

Speech recognition: Neural networks can convert spoken words into text or commands. For example, Google Assistant uses a neural network to understand natural language queries and provide relevant answers.

Machine translation: Neural networks can translate sentences or documents between different languages. For example, Google Translate uses a neural machine translation (NMT) system built on an encoder-decoder architecture with attention mechanisms.

Screenshot of Google Translate. This example used to appear in some printed phrasebooks!

Google Translate, Fair Use

Natural language processing: Neural networks can analyze and generate natural language texts for various purposes, such as sentiment analysis, question answering, text summarization, and text generation. For example, OpenAI developed GPT, a large-scale generative pre-trained transformer model that can generate coherent and diverse text on various topics in response to AI prompts.

Medical diagnosis: Neural networks can diagnose diseases or conditions based on symptoms, tests, or images. For example, IBM Watson uses a neural network to analyze medical records and provide diagnosis and treatment recommendations for cancer patients.

Financial forecasting: Neural networks can predict future trends or outcomes based on historical data or market signals. For example, JPMorgan Chase uses a neural network to forecast the stock market movements and optimize its trading strategies.

Quality control: Neural networks can detect defects or anomalies in products or processes based on visual inspection or sensor data. For example, Tesla uses a neural network to monitor the quality of its electric vehicles and identify any issues or faults.

Gaming: Neural networks can play games or simulate realistic environments based on rules or rewards. For example, Google DeepMind developed AlphaGo, a deep reinforcement learning system that defeated Lee Sedol, one of the world's strongest Go players, in 2016. Go is a complex board game long thought to require human intuition and creativity.

Screenshot of AlphaGo versus Lee Sedol in 2016. AlphaGo won four out of the five games played.

CC BY-SA 4.0 ShareAlike (https://creativecommons.org/licenses/by-sa/4.0/)

Future of Neural Networks in Deep Learning

Some of the possible directions for the future of neural networks in deep learning are:

Neuroevolution: This is the application of evolutionary algorithms (EAs) to the configuration and optimization of neural networks. EAs are optimization techniques that mimic the process of natural selection and evolution. Neuroevolution can help to find good neural network architectures, hyperparameters, weights, and activation functions with little human intervention.

Symbolic AI: This direction integrates symbolic artificial intelligence (AI) with neural networks. Symbolic AI uses logic, rules, symbols, and knowledge representation to perform reasoning and inference. Combining it with neural networks may help to address some of their weaknesses in explainability, generalization, robustness, and causal reasoning. Some researchers argue instead that neural networks will eventually move past these shortcomings without help from symbolic AI, through better architectures and learning algorithms.

Generative models: These neural networks can generate new data or content based on existing data or content. Generative models can be used for purposes such as data augmentation, image synthesis, text generation, and style transfer. Generative adversarial networks (GANs) are a popular type of generative model that uses two competing neural networks: a generator that tries to create realistic data and a discriminator that tries to distinguish between real and fake data.
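Returning to neuroevolution, the first direction listed above, the following is a minimal sketch of the idea under several simplifying assumptions: it evolves only the weights of a fixed, tiny 2-2-1 network (not its architecture or hyperparameters), it uses mutation and selection without crossover, and the task (XOR), the function names, and all parameter values are hypothetical choices for illustration. Real neuroevolution systems are considerably more elaborate.

```python
import math
import random

# Toy task: the XOR function, as (inputs, target) pairs.
XOR_DATA = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0),
            ((1.0, 0.0), 1.0), ((1.0, 1.0), 0.0)]
N_WEIGHTS = 9  # 2 hidden neurons x (2 weights + bias) + output (2 weights + bias)


def forward(genome, x):
    """Run a fixed 2-2-1 network whose weights are stored in the genome."""
    h1 = math.tanh(genome[0] * x[0] + genome[1] * x[1] + genome[2])
    h2 = math.tanh(genome[3] * x[0] + genome[4] * x[1] + genome[5])
    z = genome[6] * h1 + genome[7] * h2 + genome[8]
    return 0.5 * (1.0 + math.tanh(z / 2.0))  # sigmoid, written to avoid overflow


def error(genome):
    """Sum of squared errors over the task; lower means fitter."""
    return sum((forward(genome, x) - y) ** 2 for x, y in XOR_DATA)


def evolve(generations=200, population_size=50, mutation_scale=0.5, seed=0):
    """Keep the fittest fifth of each generation and refill the population
    with randomly mutated copies of those survivors."""
    rng = random.Random(seed)
    population = [[rng.uniform(-1.0, 1.0) for _ in range(N_WEIGHTS)]
                  for _ in range(population_size)]
    n_survivors = max(1, population_size // 5)
    history = []  # best error seen at the start of each generation
    for _ in range(generations):
        population.sort(key=error)
        history.append(error(population[0]))
        survivors = population[:n_survivors]
        children = []
        while len(survivors) + len(children) < population_size:
            parent = rng.choice(survivors)
            children.append([w + rng.gauss(0.0, mutation_scale) for w in parent])
        population = survivors + children
    best = min(population, key=error)
    history.append(error(best))
    return best, history


if __name__ == "__main__":
    best, history = evolve()
    print("initial best error:", history[0])
    print("final best error:  ", history[-1])
```

Because the survivors are carried over unchanged, the best error never gets worse from one generation to the next. No gradients are computed at any point, which is what distinguishes this approach from training with backpropagation.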